Hunters are tired of tool-centric advice and want to think differently. Consider time itself as the dimension to hunt across. Alerts and logs arrive from countless systems, and the challenge is connecting events separated by seconds, hours, months, or even years into a single investigative thread. With time as the organizing axis, you can shift threat hunting from retroactive to strategic.

Threats don’t respect organizational silos. They leave breadcrumbs across real-time telemetry, short-term storage, and long-term archives. Understand how to navigate these temporal layers and you can transform scattered signals into actionable insights by uncovering long-term patterns. Instead of bouncing between tools and rehydrating datasets, analysts pivot along a timeline to gain insights from different data tiers and inject threat intelligence back into their real-time telemetry.

Let’s explore how to operationalize this temporal approach by running Sigma rule-based detections in the pipeline and enriching telemetry with threat intelligence. You can enable threat hunts that span your full data landscape, ranging from seconds to years.

Time as a hunting dimension

With time as the primary axis for hunting, we fundamentally change our approach to investigations. Rather than individual tools or data repositories, we pivot along a timeline that spans near-real-time detections, short-term historical logs, and long-term archives.

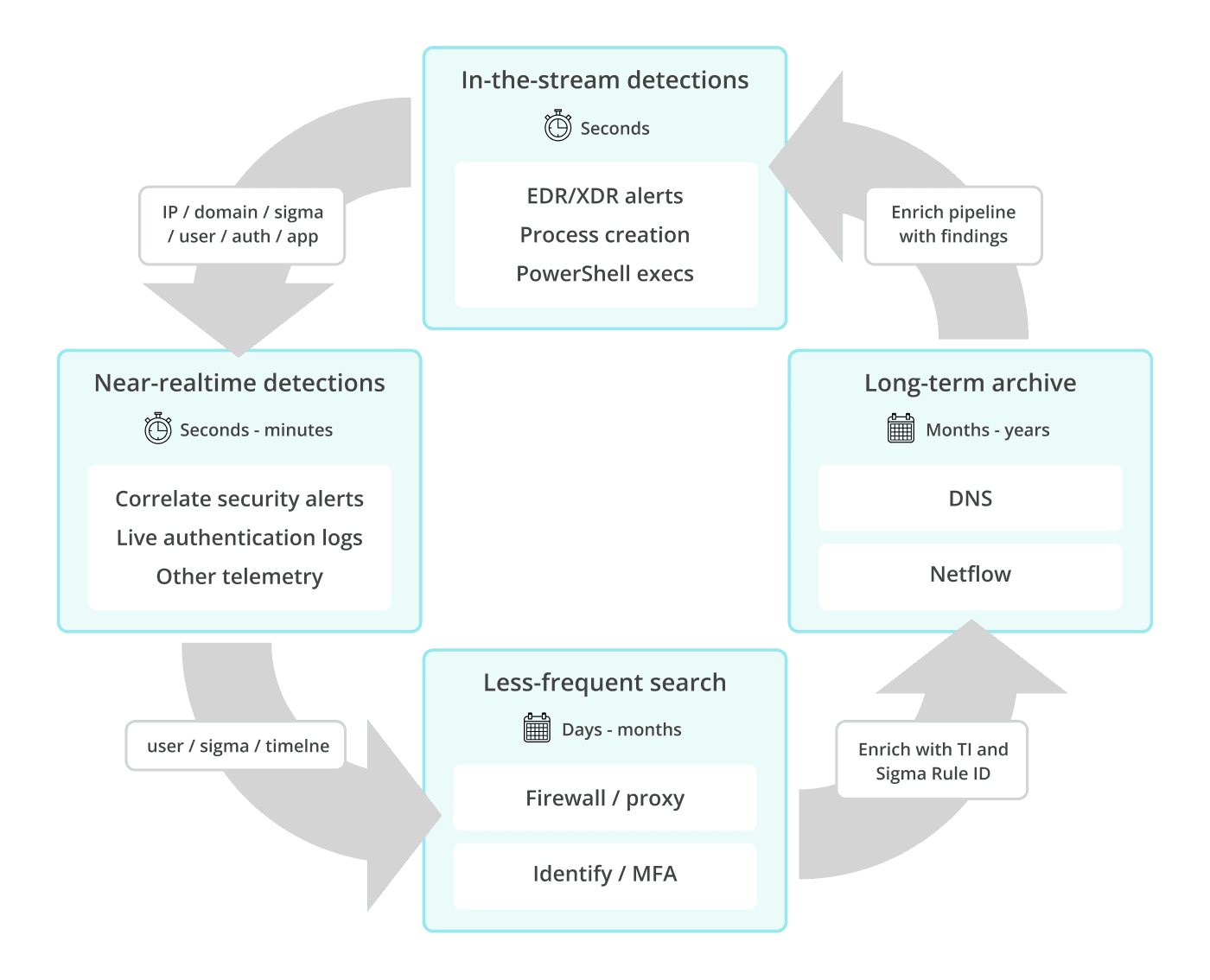

In the workflow concept diagram (Figure 1), analysts begin with the data pipeline, move to hot telemetry, pivot into warm logs for correlation, and finally validate their findings in archives. The arrows represent investigative pivots, not data movement. Each query uses pipeline enrichment, federated searches, and tiered data repositories to access relevant information in place. That expands the threat hunting surface and avoids costly log data restores.

Figure 1. Start in the stream, pivot through hot and cold logs, and tie findings to enrich pipelines and uncover long-dwell threats.

This workflow encourages analysts to think about the sequence of investigative pivots and how enrichment and correlation can occur at every stage. It creates a more complete and actionable threat picture. Each pivot reveals connections that traditional, tool-focused workflows often miss. Your analysts can identify threats before they escalate and implement insights directly into data pipelines so that detections and enrichments automatically benefit future hunts.

Data pipelines as a detection workbench

Pivoting across time tiers efficiently, reliably, and repeatably is vital. This is where data pipelines can become your hunting workbench — a place where raw logs and events are transformed into insights before they even land, seeded with threat intelligence and other context while in transit.

At its core, a telemetry data pipeline is an ordered series of small, composable functions that process every event. Each function can normalize fields, enrich events with threat intelligence, tag matches to Sigma rules, and route data to a purpose-built analytical destination. By embedding this logic into the data flow itself, you ensure consistency across sources and time.
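To make the "ordered series of small, composable functions" idea concrete, here is a minimal Python sketch. It is not how Cribl Stream implements pipelines; the field aliases, function names, and destination names are all hypothetical and chosen only for illustration.

```python
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]
Step = Callable[[Event], Event]

def normalize(event: Event) -> Event:
    # Map hypothetical vendor-specific field names onto a shared schema.
    aliases = {"SourceIp": "src_ip", "Image": "process_name", "User": "user"}
    return {aliases.get(key, key): value for key, value in event.items()}

def lowercase_process(event: Event) -> Event:
    # Lowercase the process name so later comparisons are case-insensitive.
    out = dict(event)
    if "process_name" in out:
        out["process_name"] = str(out["process_name"]).lower()
    return out

def route(event: Event) -> Event:
    # Pick a destination: tagged events go to alerts, everything else to archive.
    out = dict(event)
    out["destination"] = "alerts" if out.get("sigma_id") else "archive"
    return out

def run_pipeline(event: Event, steps: List[Step]) -> Event:
    # Apply each step in order; every step receives the previous step's output.
    for step in steps:
        event = step(event)
    return event

raw = {"SourceIp": "10.0.0.5", "Image": "C:\\Windows\\PowerShell.EXE"}
processed = run_pipeline(raw, [normalize, lowercase_process, route])
```

Because each step takes an event and returns an event, steps can be reordered, reused across sources, or tested in isolation, which is the property that keeps processing consistent across sources and time.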

For example, the same Sigma rule that flags a suspicious PowerShell process in near-real-time can also surface the same pattern in historical firewall logs or archived DNS records collected months ago. In this case the goal is to effectively turn raw log events into Sigma rules-based detections, tag them, enrich them, and send them off as an alert when they match. This decreases the time to detect and enables a long-term threat hunting strategy.

Sigma also fits naturally into a pipeline-driven hunting model. Instead of chasing alerts tied to a specific platform, we can tag events once, early in the stream, and let those tags become durable pivots across time, data sources, and investigations. That’s what makes Sigma a hunting primitive, not just another detection format. For a great proof of concept for detections on the wire that are ephemeral and hold state, check out this blog: Stop SIEM’ing Slow.

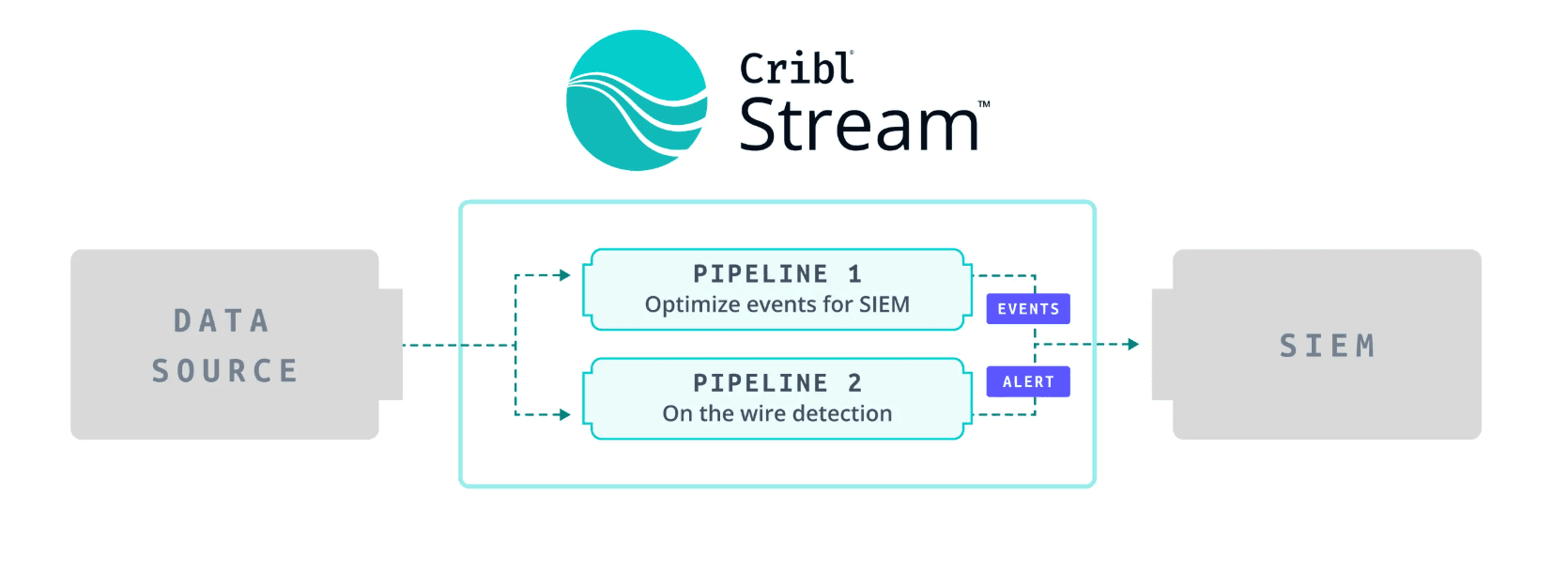

Figure 2. Basic diagram of “on-the-wire detection” inside a data pipeline in Cribl Stream

Sigma rules-based detections in the Stream

Sigma is an open, vendor-agnostic detection language that expresses attacker behavior rather than product-specific alerts. I like Sigma’s durable abstraction layer: a single detection logic that survives tool changes, storage tiers, and time itself. I use Sigma for this approach because I prefer behavioral consistency over indicator volatility. Indicators age out, feeds go stale, and adversaries rotate infrastructure, but a well-written Sigma rule continues to fire across hot telemetry, warm logs, and cold archives as long as the behavior persists.

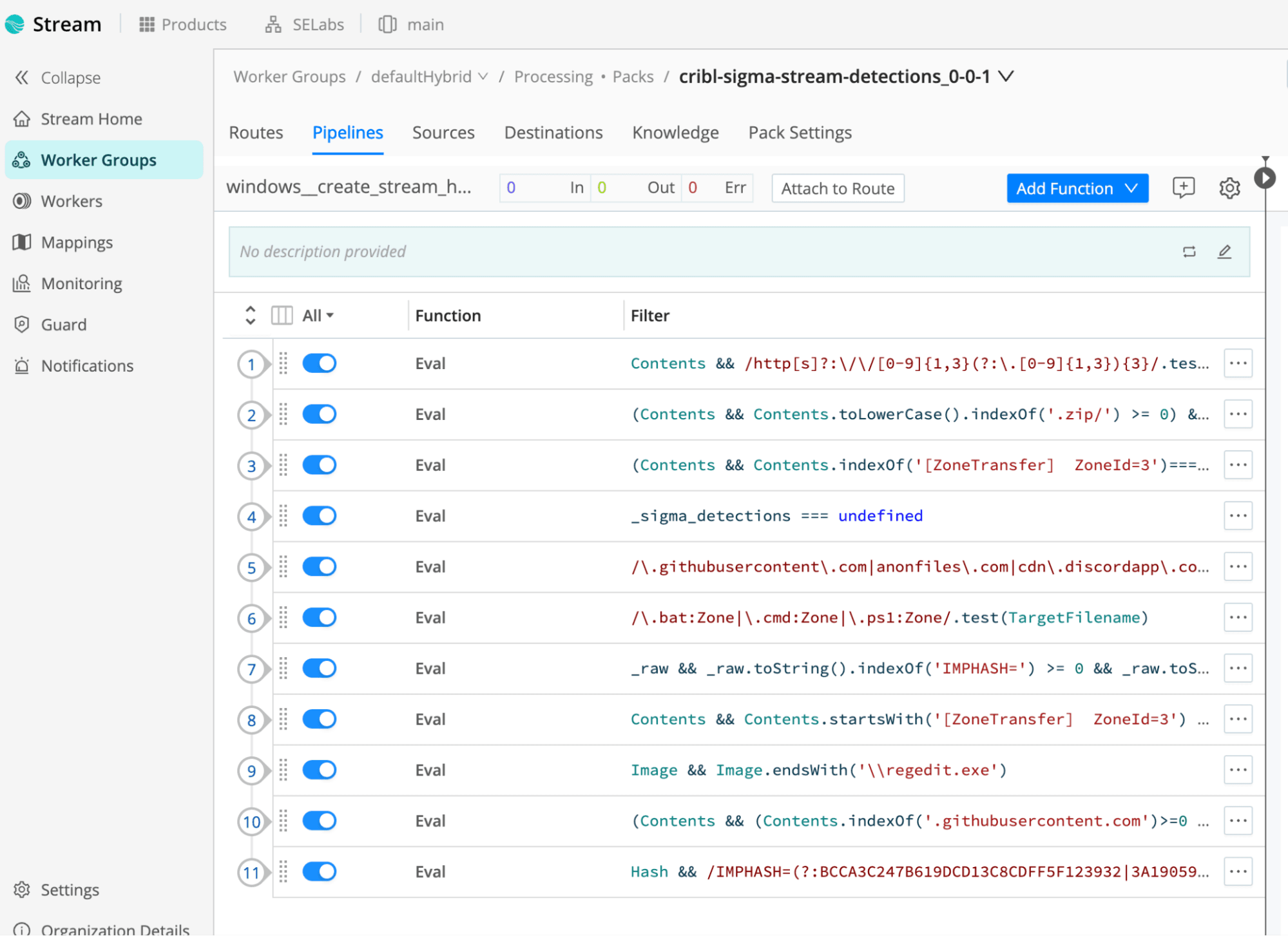

Imagine a PowerShell process flagged in an EDR event or Windows event log. The pipeline normalizes the event, checks it against threat intel, tags it with the Sigma rule ID, and clones it to an alert index or analysis dataset. When analysts pivot to warm or archived firewall logs, preserved Sigma IDs and enrichment tags enable immediate pattern recognition across data tiers. This continuity eliminates manual event rehydration while simultaneously exposing persistent threats that span multiple retention periods. Pipelines can make up the first tier in time, a dimension that enables efficient hunting across all other data tiers. Here are the main steps required for basic threat detection with Sigma rules in a Cribl Stream data pipeline:

Normalization: Standardize fields like user, src_ip, dst_domain, process_name, and file_hash so that Sigma rules can operate consistently.

Threat intelligence lookup: Cross-reference each event with high-confidence IOCs from local lookups or APIs, tagging matches with source, confidence, and type.

Sigma tagging: If an event matches a Sigma rule, the pipeline adds metadata linking the event to the rule ID (sigma_id:SIG-EX-001).

Routing and cloning: Send enriched events to incident response streams, detection indices or archives while minimizing unnecessary duplication.

Hunting over time: Sigma rule IDs and threat intelligence applied to logs in the stream allow you to correlate these events across data repositories over time.

Figure 3. In-stream event normalization and Sigma rule ID tagging inside a Cribl data pipeline
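The Sigma tagging step can be sketched as follows. This is a deliberately simplified matcher, not a Sigma engine: real Sigma rules are YAML documents with `logsource`, `detection`, and `condition` sections, and production use would rely on a proper Sigma converter or backend. The rule content, the `command_line` field, and the choice to store `sigma_id` as a list (so that several rules can tag one event) are assumptions for illustration; only the ID `SIG-EX-001` and the `process_name` field come from the steps above.

```python
from typing import Any, Dict, List

Event = Dict[str, Any]

# Hypothetical rule expressed as a Python dict with Sigma-style field modifiers.
RULES = [
    {
        "id": "SIG-EX-001",
        "title": "Encoded PowerShell command line (illustrative)",
        "selection": {
            "process_name|endswith": "powershell.exe",
            "command_line|contains": "-enc",
        },
    }
]

def matches(event: Event, selection: Dict[str, str]) -> bool:
    # Every key in the selection must match (logical AND), case-insensitively.
    for key, expected in selection.items():
        field, _, modifier = key.partition("|")
        value = str(event.get(field, "")).lower()
        expected = expected.lower()
        if modifier == "endswith":
            ok = value.endswith(expected)
        elif modifier == "contains":
            ok = expected in value
        else:
            ok = value == expected
        if not ok:
            return False
    return True

def tag_sigma(event: Event, rules: List[Dict[str, Any]]) -> Event:
    # Return a copy of the event, adding sigma_id when at least one rule matches.
    matched = [rule["id"] for rule in rules if matches(event, rule["selection"])]
    out = dict(event)
    if matched:
        out["sigma_id"] = matched
    return out

event = {
    "process_name": "C:\\Windows\\System32\\powershell.exe",
    "command_line": "powershell.exe -enc SQBFAFgA",
}
tagged = tag_sigma(event, RULES)
```

The tag travels with the event into whatever destination it is routed to, so a later search in a warm or cold tier can filter on the rule ID instead of re-evaluating the rule logic.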

Integrate threat intelligence across time

Pipelines give you the workbench, and threat intelligence (TI) is the lens that brings context to every pivot across time. Without TI, a spike in outbound connections or a rare process spawn is just noise. With it, those same signals can reveal patterns, link seemingly isolated events, and highlight previously unseen adversary infrastructure, even in archives months or years old. As you gain intelligence from investigating and hunting across data tiers, the pipeline gives you a standardized way of injecting that intelligence back into the data flow. The SANS Internet Storm Center offers a good, open TI resource through an API that is straightforward to integrate.

In practice, this means two things:

High-confidence indicators should be embedded directly into the pipeline for fast, deterministic enrichment.

Lower-confidence or emerging feeds can remain as on-demand lookups for analysts exploring unusual activity.

By tagging every match with the source, confidence level, and type of indicator, analysts can quickly prioritize investigations and understand the provenance of every piece of evidence.
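A minimal sketch of the embedded, high-confidence lookup might look like this. The indicator values use documentation-reserved addresses and domains, and the feed names, tag field names (`ti_source`, `ti_confidence`, `ti_type`, `ti_matches`), and the set of fields checked are assumptions, not a prescribed schema.

```python
from typing import Any, Dict, Iterable

Event = Dict[str, Any]

# Hypothetical high-confidence IOC table, keyed by indicator value.
HIGH_CONFIDENCE_IOCS: Dict[str, Dict[str, str]] = {
    "203.0.113.7": {
        "ti_source": "internal-ir",
        "ti_confidence": "high",
        "ti_type": "ip",
    },
    "bad.example.com": {
        "ti_source": "partner-feed",
        "ti_confidence": "high",
        "ti_type": "domain",
    },
}

def enrich_with_ti(
    event: Event,
    iocs: Dict[str, Dict[str, str]] = HIGH_CONFIDENCE_IOCS,
    fields: Iterable[str] = ("src_ip", "dst_domain", "file_hash"),
) -> Event:
    # Check selected fields against the IOC table and record provenance per hit.
    out = dict(event)
    hits = []
    for field in fields:
        value = event.get(field)
        if isinstance(value, str) and value in iocs:
            hits.append({"field": field, "indicator": value, **iocs[value]})
    if hits:
        out["ti_matches"] = hits
    return out

enriched = enrich_with_ti({"src_ip": "203.0.113.7", "dst_domain": "ok.example.org"})
```

Keeping the table in memory makes enrichment deterministic and fast per event; lower-confidence feeds would stay out of this table and be queried on demand instead.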

TI works hand in hand with Sigma rules, and it benefits from clear guidelines for how to set up and share threat intelligence across organizations (NIST provides some guidelines for this). When a Sigma detection fires, the pipeline can enrich the event with relevant TI, creating a layered signal that’s both rule-based and intelligence-driven. This dual annotation allows more precise cross-tier correlation: a match in hot telemetry can link directly to warm and cold events, with the TI tags and Sigma IDs providing the common thread. Analysts can pivot confidently, knowing that each historical hit is not just a raw log entry but a contextually enriched signal.
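As a rough illustration of cross-tier correlation, the sketch below groups already-tagged events from several tiers by Sigma rule ID and summarizes how long the behavior has been visible. It assumes each tier's search results have already been retrieved as lists of dictionaries with a `sigma_id` tag and an ISO 8601 `timestamp`; the tier names, field names, and sample data are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from typing import Any, Dict, List

Event = Dict[str, Any]

def correlate_by_sigma_id(tiers: Dict[str, List[Event]]) -> Dict[str, Dict[str, Any]]:
    # Collect (timestamp, tier) pairs for every Sigma rule ID seen in any tier.
    timeline = defaultdict(list)
    for tier, events in tiers.items():
        for event in events:
            ids = event.get("sigma_id")
            if not ids:
                continue  # untagged events carry no pivot
            if isinstance(ids, str):
                ids = [ids]  # accept a single ID or a list of IDs
            seen_at = datetime.fromisoformat(event["timestamp"])
            for rule_id in ids:
                timeline[rule_id].append((seen_at, tier))

    # Summarize first and last sighting, tiers involved, and the span in days.
    summary = {}
    for rule_id, hits in timeline.items():
        hits.sort()
        first, last = hits[0][0], hits[-1][0]
        summary[rule_id] = {
            "first_seen": first,
            "last_seen": last,
            "tiers": sorted({tier for _, tier in hits}),
            "span_days": (last - first).days,
        }
    return summary

results = correlate_by_sigma_id({
    "hot": [{"sigma_id": "SIG-EX-001", "timestamp": "2024-06-01T12:00:00"}],
    "warm": [{"timestamp": "2024-04-10T08:30:00"}],
    "cold": [{"sigma_id": "SIG-EX-001", "timestamp": "2024-01-01T12:00:00"}],
})
```

A rule ID that appears in both the hot and cold tiers with a long span is a candidate long-dwell behavior, and the same grouping could be keyed on TI tags instead of Sigma IDs.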

By embedding threat intelligence at the pipeline level and carrying it through federated searches, organizations gain a hunting workflow that thinks in time, not silos. Every pivot to hot alerts, warm logs, or long-term archives is accelerated and informed.

Thinking in time transforms threat hunting

Hunting across time isn’t just a conceptual exercise; it’s a practical strategy that transforms how security teams discover, investigate, and respond to threats. By shifting the focus from tools to time as the primary hunting dimension, your analysts can pivot seamlessly across hot, warm, and cold data, connecting signals that would otherwise remain isolated.

Data pipelines and federated search capabilities make this approach operational. Pipelines normalize events, enrich them with threat intelligence, tag Sigma rule matches, and route data so that every pivot, whether into real-time telemetry or multi-year archives, contributes to a coherent investigative narrative. Sigma rules provide a consistent detection language, enabling the same logic to apply across data tiers. TI ensures that alerts are meaningful and context-rich. Together, these components create a repeatable, structured hunting workflow that scales across both time and your infrastructure. Time is no longer a barrier; it is the dimension that empowers the modern threat hunter.