Use Case

Reduce log volume and infrastructure costs

More signal, less noise. Send metrics, logs, and traces to the most cost-effective destinations while reducing licensing and infrastructure costs. By removing extraneous data and filtering noise, organizations can focus on high-value telemetry without impacting compliance.

The Challenge

As environments expand, telemetry volume (especially logs) grows exponentially, and AI adoption is accelerating that trend. As a result, you’re left trying to filter out low-value data without losing critical insights, while keeping storage and ingestion costs under control as data retention requirements grow.

The Solution

Make room for the data that matters most

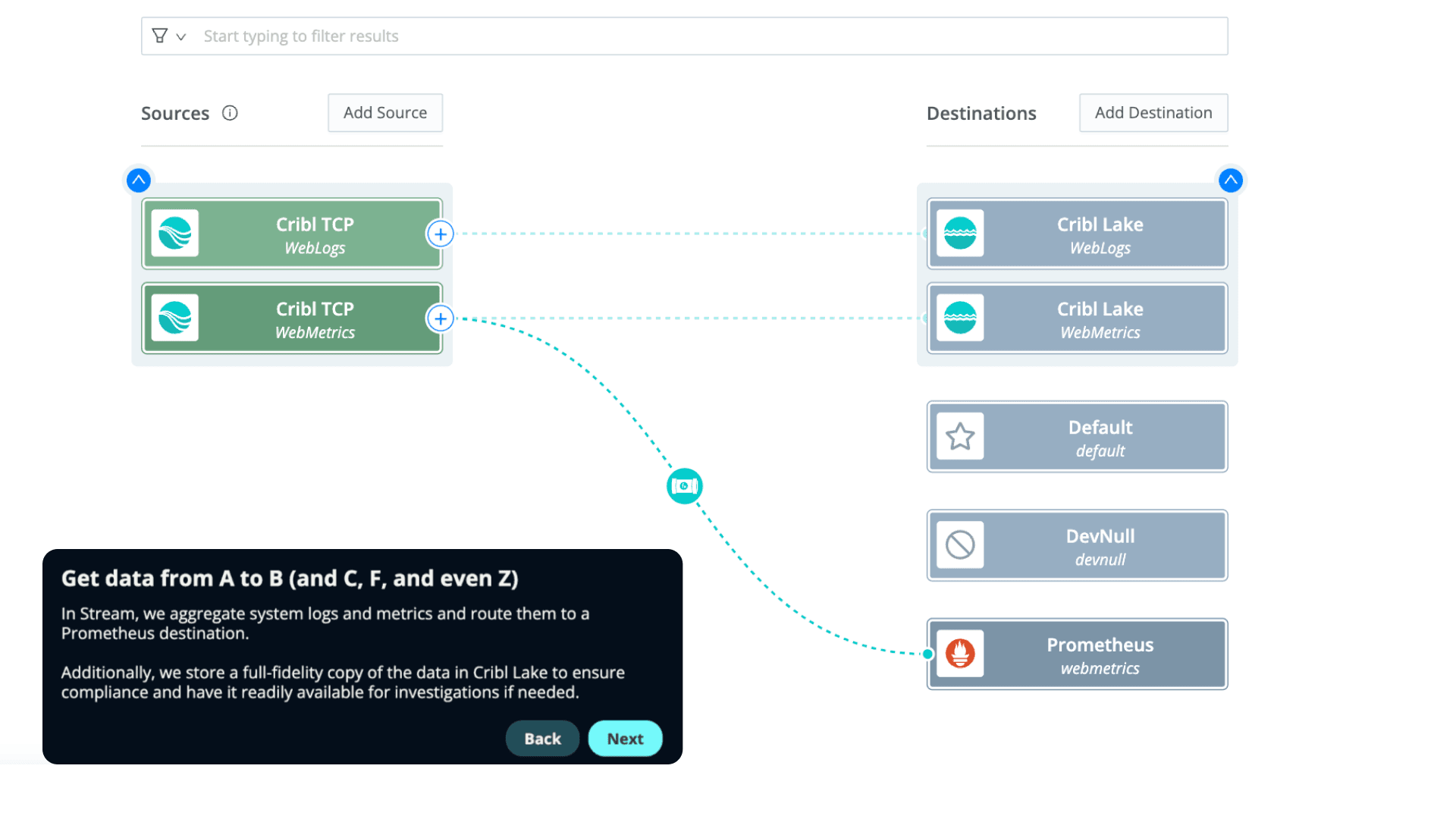

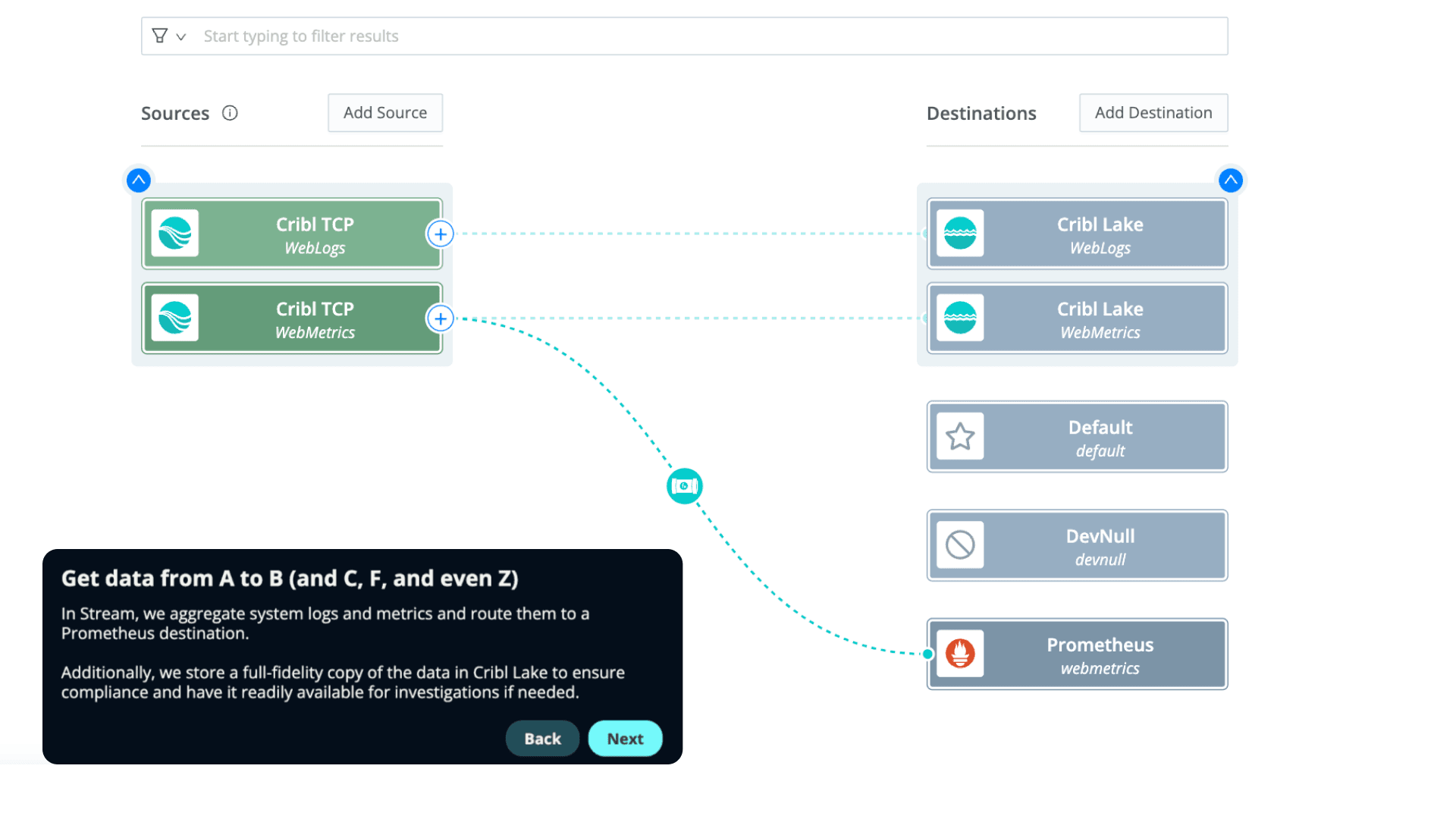

Curb the exponential cost curve of data retention by optimizing storage and indexing strategies for maximum efficiency. Send high-value data to systems your team depends on. Keep all your data in low-cost storage, such as a data lake, for easy retrieval and to maintain compliance.
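The routing idea above can be sketched in a few lines of plain Python. This is an illustration only, not Cribl's API: the destination names `data_lake` and `analytics_tool`, the `level` field, and the `is_high_value` rule are all hypothetical and stand in for whatever criteria your team uses.

```python
from typing import Callable

Event = dict  # a telemetry event modeled as a flat dictionary (assumption)


def is_high_value(event: Event) -> bool:
    """Hypothetical rule: only warnings and errors count as high value."""
    return event.get("level") in {"WARN", "ERROR"}


def route(event: Event, high_value: Callable[[Event], bool] = is_high_value) -> list:
    """Return the destinations for one event.

    Every event goes to low-cost storage so a full copy is retained;
    only high-value events are also sent to the analytics tool.
    """
    destinations = ["data_lake"]
    if high_value(event):
        destinations.append("analytics_tool")
    return destinations


events = [
    {"level": "DEBUG", "msg": "cache hit"},
    {"level": "ERROR", "msg": "payment failed"},
]
for event in events:
    print(event["msg"], "->", route(event))
```

The point of the design is that the full-fidelity copy is unconditional, while the more expensive destination sits behind an explicit predicate.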

Efficiently handle telemetry coming from multiple sources, streamlining your operational processes. Cleaner, streamlined data leads to faster investigations, optimizes the operations of AI SOC and AI SRE agents, and enables better decision-making.

Convert verbose logs into metrics. Remove repeated log entries and drop low-value fields from events and logs. Reduce tool ingestion by 50% or more. Maintain full-fidelity copies in your data lake.
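As a rough sketch of the three reduction techniques named above (dropping low-value fields, suppressing repeated entries, and converting logs into metrics), here is a generic Python illustration. It is not how Cribl implements these steps: the field names `thread_id` and `debug_context`, the `repeat_count` field, and the `status` dimension are hypothetical, and event values are assumed to be simple hashable types such as strings and numbers.

```python
from collections import Counter

# Hypothetical fields that nobody queries downstream.
LOW_VALUE_FIELDS = {"thread_id", "debug_context"}


def drop_fields(event, fields=LOW_VALUE_FIELDS):
    """Return a copy of the event without the low-value fields."""
    return {k: v for k, v in event.items() if k not in fields}


def suppress_repeats(events):
    """Keep the first copy of each identical event and count its repeats."""
    seen = {}
    for event in events:
        key = tuple(sorted(event.items()))  # assumes hashable values
        if key in seen:
            seen[key]["repeat_count"] += 1
        else:
            seen[key] = {**event, "repeat_count": 1}
    return list(seen.values())


def logs_to_metric(events, dimension="status"):
    """Collapse events into a count per value of one dimension."""
    return Counter(event.get(dimension) for event in events)


raw = [
    {"status": 200, "path": "/health", "thread_id": 7},
    {"status": 200, "path": "/health", "thread_id": 9},
    {"status": 500, "path": "/pay", "thread_id": 3},
]
trimmed = [drop_fields(e) for e in raw]
compact = suppress_repeats(trimmed)
metric = logs_to_metric(trimmed)
print(compact)
print(dict(metric))
```

Order matters in this sketch: the two health-check events only become identical, and therefore collapsible, after the per-thread field has been dropped.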

You don’t have to love all your telemetry equally. Categorize data for optimized storage or elimination, ensuring cost-effective operations.

Customer success story

Integrations

Get logs, metrics, and traces from any source to any destination. Cribl consistently adds new integrations so you can continue to build pipelines to and from even more sources and destinations in your toolkit. Check out our integrations page for the complete list.

See it for yourself

In this interactive demo, you'll learn how to remove duplicate fields, filter out null values and low-value events, sample data, and convert log data into metrics for significant volume reduction – all while retaining a complete copy for compliance.
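To give a feel for two of the steps the demo covers, filtering out null values and sampling, here is a minimal Python sketch. It is an assumption-laden illustration rather than the demo's actual pipeline: the set of values treated as null, the `sample_rate` field, and the `is_error` predicate are all hypothetical.

```python
def strip_nulls(event):
    """Drop fields whose value is None, an empty string, or the literal 'null'."""
    return {k: v for k, v in event.items() if v not in (None, "", "null")}


def sample(events, rate, keep):
    """Keep every event matching `keep`, plus 1 in `rate` of the rest.

    Sampled events are tagged with the rate so counts can be
    scaled back up later.
    """
    kept = []
    candidates_seen = 0
    for event in events:
        if keep(event):
            kept.append(event)
            continue
        if candidates_seen % rate == 0:
            kept.append({**event, "sample_rate": rate})
        candidates_seen += 1
    return kept


def is_error(event):
    """Hypothetical rule: errors are never sampled away."""
    return event.get("level") == "ERROR"


stream = [{"level": "DEBUG", "user": None, "n": i} for i in range(10)]
stream.append({"level": "ERROR", "user": "alice", "n": 10})

cleaned = [strip_nulls(e) for e in stream]
reduced = sample(cleaned, rate=5, keep=is_error)
print(len(stream), "events in,", len(reduced), "events out")
```

Recording the sample rate on each kept event is a common design choice because it lets downstream queries estimate the original volume.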

Resources