Use Case

Route to multiple destinations

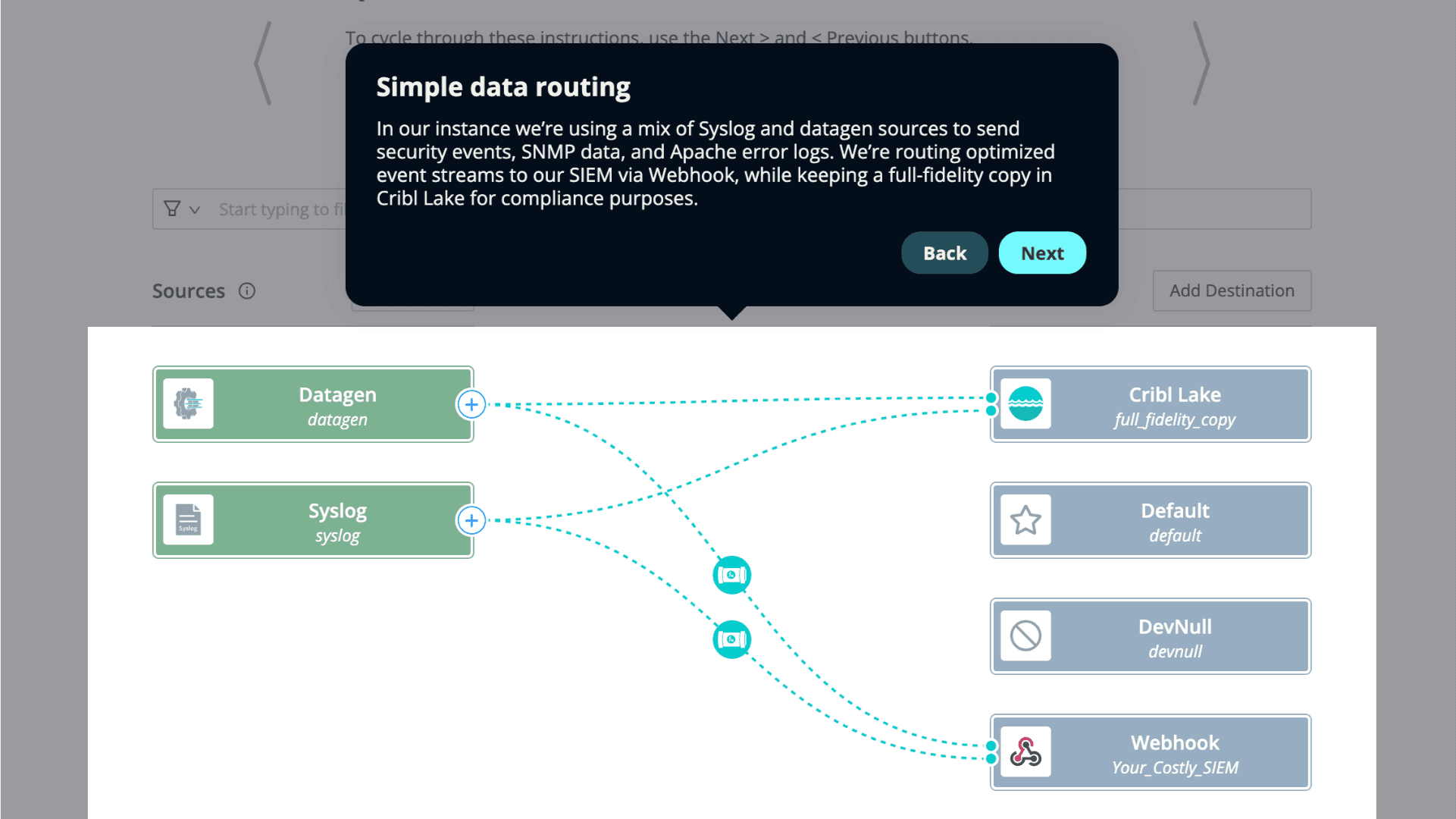

Easily route telemetry data from any source to one or more destinations, whether cloud, on-premises, or hybrid.

The Challenge

As your infrastructure and app portfolio grow, so does telemetry volume, making it harder for IT and security teams to extract value. You need a solution that can seamlessly route data from any source to any destination, giving you operational efficiency and real-time insights.

The Solution

Simplify telemetry data routing like a boss. You got this.

Route telemetry from any source through pipelines that normalize, enrich, and filter data to fit the needs of each destination. Collect data once and deliver it to multiple destinations for consistent analysis. Process logs, metrics, and traces in flight for quick, informed decisions.
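To make the idea concrete, here is a minimal conceptual sketch of "collect once, deliver to many" in plain Python. It is not Cribl's API or configuration format: the stage functions, the `ROUTES` table, and the destination names `analytics` and `data_lake` are all hypothetical, chosen only to show how each destination can get its own normalize, enrich, and filter steps.

```python
from typing import Callable, Optional

Event = dict
# A stage transforms an event, or returns None to drop it.
Stage = Callable[[Event], Optional[Event]]


def normalize(event: Event) -> Event:
    # Lower-case field names so every destination sees a consistent schema.
    return {key.lower(): value for key, value in event.items()}


def enrich(event: Event) -> Event:
    # Add a hypothetical "env" tag; a real pipeline might use a lookup table.
    return {**event, "env": "prod"}


def drop_debug(event: Event) -> Optional[Event]:
    # Filter: discard debug-level events for destinations that do not need them.
    return None if event.get("level") == "debug" else event


def run_pipeline(event: Event, stages: list[Stage]) -> Optional[Event]:
    # Apply stages in order; stop as soon as one of them drops the event.
    current: Optional[Event] = event
    for stage in stages:
        current = stage(current)
        if current is None:
            return None
    return current


def route(events: list[Event], routes: dict[str, list[Stage]]) -> dict[str, list[Event]]:
    # Each event is collected once, then run through every destination's pipeline.
    delivered: dict[str, list[Event]] = {name: [] for name in routes}
    for event in events:
        for name, stages in routes.items():
            result = run_pipeline(dict(event), stages)
            if result is not None:
                delivered[name].append(result)
    return delivered


# Hypothetical routing table: one pipeline per destination.
ROUTES = {
    "analytics": [normalize, enrich, drop_debug],
    "data_lake": [normalize],
}

events = [
    {"Level": "debug", "Message": "cache miss"},
    {"Level": "error", "Message": "disk full"},
]
delivered = route(events, ROUTES)
```

In this sketch the analytics destination receives only the enriched error event, while the data lake keeps a normalized copy of everything.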

Use Copilot Editor to convert your routing requirements into working pipelines. Cribl’s AI-driven approach makes tasks easy while keeping you in the loop.

Ensure your data adheres to strict security and privacy standards, for protection and compliance. Unlock vendor-neutral routing, processing capabilities, and customizable pipelines for secure data management. Govern data and manage access while masking personally identifiable information (PII) and supporting regulatory compliance.
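As an illustration of what masking PII in flight can look like, here is a small Python sketch under stated assumptions. The two regular expressions (email addresses and US Social Security number formats) and the function names `mask_pii` and `mask_event` are hypothetical examples, not Cribl functions, and real compliance work would need a broader, reviewed set of patterns.

```python
import re

# Assumed patterns for illustration only; production rules need careful review.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-(\d{4})\b")


def mask_pii(text: str) -> str:
    # Replace email addresses entirely and keep only the last four SSN digits.
    text = EMAIL.sub("<email>", text)
    return SSN.sub(r"***-**-\1", text)


def mask_event(event: dict) -> dict:
    # Mask every string field; leave non-string values (numbers, booleans) untouched.
    return {
        key: mask_pii(value) if isinstance(value, str) else value
        for key, value in event.items()
    }


masked = mask_event({"message": "login by jane.doe@example.com, ssn 123-45-6789", "status": 200})
```

Running a step like this before delivery means downstream tools never see the raw values.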

Build pipelines to route to your analytics tools and low-cost data lakes. Adapt to increasing data volumes, complexity, and types without losing performance, so you get deeper insights and make better decisions.

Customer success story

Integrations

Get logs, metrics, and traces from any source to any destination. Cribl consistently adds new integrations so you can continue to build pipelines to and from even more sources and destinations in your toolkit. Check out our integrations page for the complete list.

SEE IT FOR YOURSELF

Data integrations between tools can be hard to set up and maintain, but with Cribl, routing data is easy. Check out this quick click-through demo to see how Cribl can easily route data between your monitoring tools, data stores, and more.

Resources