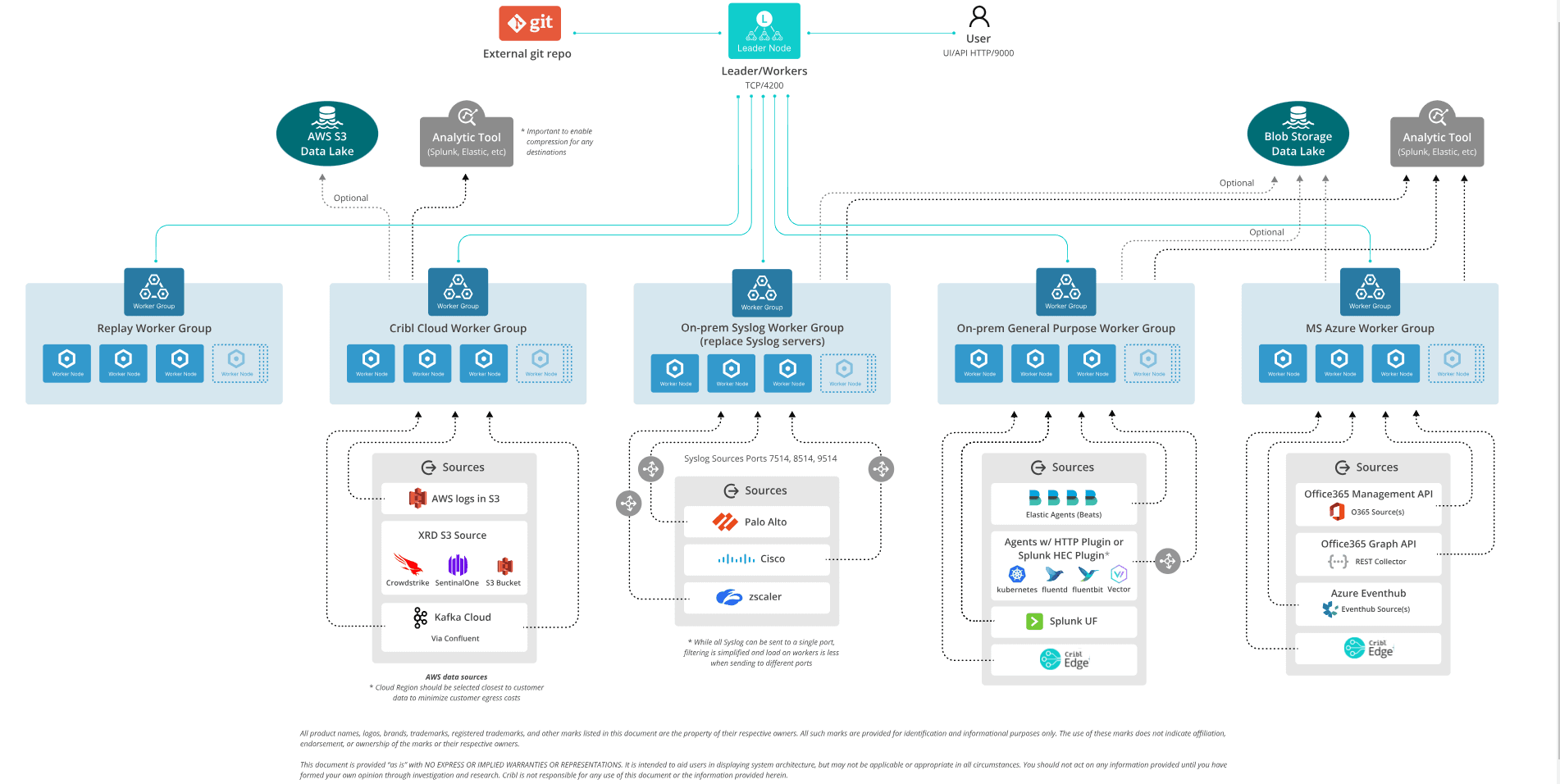

In this live stream, Ahmed Kira and I provided more details about the Cribl Stream Reference Architecture, which is designed to help observability admins deploy Cribl Stream faster and get more value from it. We explained the guidelines for deploying the comprehensive reference architecture to meet the needs of large customers with diverse, high-volume data flows. Then, we shared different use cases and discussed their pros and cons.

Cribl’s Reference Architectures provide a way for admins to get 70% of the way towards deploying Cribl Stream. The sample environment described below is a template for sending data to many destinations while minimizing data egress costs. It incorporates solutions to some of the challenges that larger organizations typically face.

MS Azure Worker Group

In this sample environment, the leader is up in Cribl Cloud and managed by Cribl. On the right-hand side, you’ll see an Azure worker group. There are two reasons to consider putting a worker group in a different cloud provider.

The first is to be as close as possible to the data you’re collecting. By keeping the data close, you can minimize the amount of processing necessary and cut egress costs. With this setup, you also reduce the risk of workloads competing for the same resources. Failing small is much better than failing big.
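To make the egress point concrete, here is a rough back-of-the-envelope sketch. It assumes that a worker group running next to the data can drop or trim some fraction of it before anything leaves that cloud, and that egress is billed per GB. The `reduction` fraction and `price_per_gb` rate are placeholders for your own figures, not Cribl or cloud-provider numbers.

```python
def monthly_egress_cost(gb_per_day: float, reduction: float,
                        price_per_gb: float, days: int = 30) -> float:
    """Estimate egress cost for data leaving a cloud after source-side reduction.

    gb_per_day:   raw volume collected in that cloud each day
    reduction:    fraction (0..1) dropped or trimmed before egress (assumed)
    price_per_gb: placeholder egress rate; substitute your provider's pricing
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    # Only the data that survives processing near the source is egressed.
    egressed_gb = gb_per_day * (1.0 - reduction) * days
    return egressed_gb * price_per_gb


if __name__ == "__main__":
    rate = 0.05  # placeholder rate, not a real price
    raw = monthly_egress_cost(1000, 0.0, rate)      # ship everything out as-is
    reduced = monthly_egress_cost(1000, 0.5, rate)  # reduce near the data first
    print(f"raw: {raw:.2f}  reduced: {reduced:.2f}")
```

Because cost scales linearly with the volume that actually crosses the cloud boundary, every fraction of data you drop before egress comes straight off the bill.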

The second reason is flexibility in where your data lives. When establishing a security or observability data lake, you don’t need to put all that data in the same data lake, S3 bucket, or blob storage container. With Cribl, you can store it in different places and still replay against all of it.

We often see customers with Azure and AWS workers using Cribl-to-Cribl connectivity between the two clouds to exchange data. This way, they can avoid building custom code or dealing with the vagaries of exchanging data between clouds.

On-Prem General-Purpose Worker Group

The next worker group in our sample architecture is an on-prem, general-purpose worker group. With this worker group, you can consolidate most of your data sources into one worker group in your data center. This is especially useful if you have a lot of Splunk universal forwarders, Cribl Edge agents, and Filebeat agents: sending them to a dedicated worker group keeps that agent traffic from competing with other workloads.

Another big reason for this approach is segmentation. For example, if you need to separate your PCI or PHI workflows, you can use this setup to isolate that data and meet compliance requirements. If you need to send that data to Elastic Cloud or Splunk Cloud, the Cribl Stream worker group lets you stage it, manage it, and deliver it to those destinations.
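As a rough illustration of segmentation, the sketch below picks a destination worker group from a field on each event. The `data_class` field and the group names are hypothetical; in Cribl Stream itself you would express this kind of split through your own routing configuration rather than code like this.

```python
from dataclasses import dataclass, field

# Hypothetical worker group names; use whatever groups you define on your leader.
PCI_GROUP = "onprem-pci"
PHI_GROUP = "onprem-phi"
GENERAL_GROUP = "onprem-general"


@dataclass
class Event:
    source: str
    fields: dict = field(default_factory=dict)


def choose_group(event: Event) -> str:
    """Pick a worker group based on a hypothetical 'data_class' field."""
    data_class = str(event.fields.get("data_class") or "").lower()
    if data_class == "pci":
        return PCI_GROUP
    if data_class == "phi":
        return PHI_GROUP
    # Anything not flagged as regulated stays in the general-purpose group.
    return GENERAL_GROUP


if __name__ == "__main__":
    events = [
        Event("payments-app", {"data_class": "PCI"}),
        Event("ehr-system", {"data_class": "phi"}),
        Event("web-frontend"),
    ]
    for e in events:
        print(e.source, "->", choose_group(e))
```

Defaulting unflagged data to the general group keeps the regulated groups small, which makes them easier to audit.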

Syslog Worker Group

Another architectural consideration worth looking into is having a single Syslog worker group. This lets you commit and deploy once instead of one region at a time. Many organizations struggle with the contention that high-volume Syslog causes. Adding agent workloads to the same workers can make the situation worse, so keeping Syslog in its own worker group lets you scale it on its own.

What sets this worker group apart is that Syslog groups use load balancers in each data center to send data to the local workers there. In Cribl Stream, though, you still manage one logical Syslog worker group, which reduces administrative burden and maintenance.

If you take one thing away from reading this post or watching the live stream, please DO NOT send your data to a single Syslog destination port! You’ll get the best results by getting as many workers involved as possible, so do everything you can to avoid being pinned to a single core.
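The sketch below models why a single destination hurts. It is a simplified illustration, assuming a load balancer that assigns each incoming syslog connection to a worker round-robin; the worker names and port are hypothetical. With a single destination, every connection lands on one worker, while round-robin spreads the same connections across the pool.

```python
from collections import Counter
from itertools import cycle

# Hypothetical worker endpoints behind a data-center load balancer.
WORKERS = ["worker-1:9514", "worker-2:9514", "worker-3:9514", "worker-4:9514"]


def assign_single_port(connections, workers):
    """Anti-pattern: every sender targets the same single destination."""
    return {conn: workers[0] for conn in connections}


def assign_round_robin(connections, workers):
    """Spread incoming syslog connections evenly across the worker pool."""
    pool = cycle(workers)
    return {conn: next(pool) for conn in connections}


def load_per_worker(assignment):
    """Count how many connections each worker ends up handling."""
    return Counter(assignment.values())


if __name__ == "__main__":
    senders = [f"device-{i}" for i in range(12)]
    print("single port:", dict(load_per_worker(assign_single_port(senders, WORKERS))))
    print("round robin:", dict(load_per_worker(assign_round_robin(senders, WORKERS))))
```

Round-robin is only one strategy; least-connections or hashing on the sender are common alternatives. Note that a single very high-volume sender on one connection may still pin one worker regardless of the algorithm, which is another reason to spread senders out.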

Cribl Cloud Worker Group

With Cribl Cloud, you will also get at least one worker group by default that you can allocate to all your AWS data sources — like in the sample architecture. But you can also send all of your cloud, on-prem, and other non-AWS data sources there. Either way, you won’t have to manage as much infrastructure. Instead, you can leverage the Cribl Cloud worker group and the Cribl Cloud leader if your use case allows for it.

This is especially important for attack surface reduction. Taking in data from multiple SaaS platforms on-prem means opening up your perimeter to everything those platforms could send from; in Cloudflare’s case, that is probably half the entire internet. Receiving that data in Cribl Cloud instead keeps that exposure outside your own perimeter.

Replay Worker Group

The last worker group in this reference architecture, and one people don’t typically consider, is the Replay worker group. It’s a great practice to allocate your replays to a separate worker group, where the workload can be spun up and spun down, instead of running them on the production worker groups that process real-time streaming data.

Using your production worker group for replay can suddenly add terabytes of data to your existing live data flows and slow everything down. A minimal-cost, ephemeral replay worker group lets you scale up to meet your needs without interrupting your production workloads.

A recent customer took advantage of this by deploying their replay worker group in AWS ECS. As more data is requested and retrieved, ECS spins up additional instances and the worker group grows; when there’s nothing to do, it scales back down.
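The scale-up, scale-down behavior of an ephemeral replay group boils down to a simple rule: run enough workers for the current backlog, and none when the backlog is empty. The function below is a minimal sketch of that decision under assumed inputs (`pending_gb` and `gb_per_worker` are hypothetical metrics). With ECS you would normally express the policy through service auto scaling rather than hand-written code.

```python
import math


def desired_replay_workers(pending_gb: float, gb_per_worker: float,
                           min_workers: int = 0, max_workers: int = 20) -> int:
    """Return how many replay workers to run for the current backlog.

    pending_gb:    data still queued for replay (hypothetical metric)
    gb_per_worker: data one worker can handle per scaling interval (assumed)
    """
    if gb_per_worker <= 0:
        raise ValueError("gb_per_worker must be positive")
    # Enough workers to clear the backlog in one interval; zero if idle.
    needed = math.ceil(pending_gb / gb_per_worker) if pending_gb > 0 else 0
    # Clamp so an idle group can shrink to min_workers and a huge replay
    # can't grow past the budgeted ceiling.
    return max(min_workers, min(max_workers, needed))


if __name__ == "__main__":
    for backlog in (0, 120, 5000):
        print(f"{backlog} GB pending -> {desired_replay_workers(backlog, 50)} workers")
```

Setting `min_workers` to 0 is what makes the group cost almost nothing between replays; the `max_workers` cap keeps a very large replay request from running up an open-ended bill.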

Choice and Control Over All of Your Data

When you have multiple worker groups, you don’t have to go to different places to manage them; one Cribl leader can still manage them all. You can also have multiple data lakes and replay from all of them via one central location within Cribl.

This flexibility gives you complete control to make the best choices for you. So, if your security team wants to use Azure for its data lake and your operations team wants to use AWS, it’s no problem. Or, if you want to use one S3 bucket for forensics and another for yearly retention, you have that option available.

The best part is that all the data in your data lake is vendor-neutral. You can return that data to Cribl Stream using replay and send it to any tool you want.

Check out the full live stream for insights on integrating Cribl Stream into any environment, enabling faster value realization with minimal effort. Our goal is to assist SecOps and Observability data admins in spending less time figuring out how to use Cribl Stream and more time getting value. Don’t miss out on this opportunity to enhance your observability administration skills.