The rapid growth of observability data (about 25%-30% each year) presents challenges for enterprises that use this data. Most want to keep more data in storage for longer periods of time, but the associated costs force difficult decisions. How much data should we collect? How long should we keep it in storage? Where should we store it? These are tough questions, but we have some suggestions about how you can instrument and keep more observability data while saving as much as 50% on infrastructure costs. To understand how a storage unit for observability data might work, let’s explore an analogy about movie night. (It will make sense, I promise you.)

Would Someone Please Move that Snowboard?

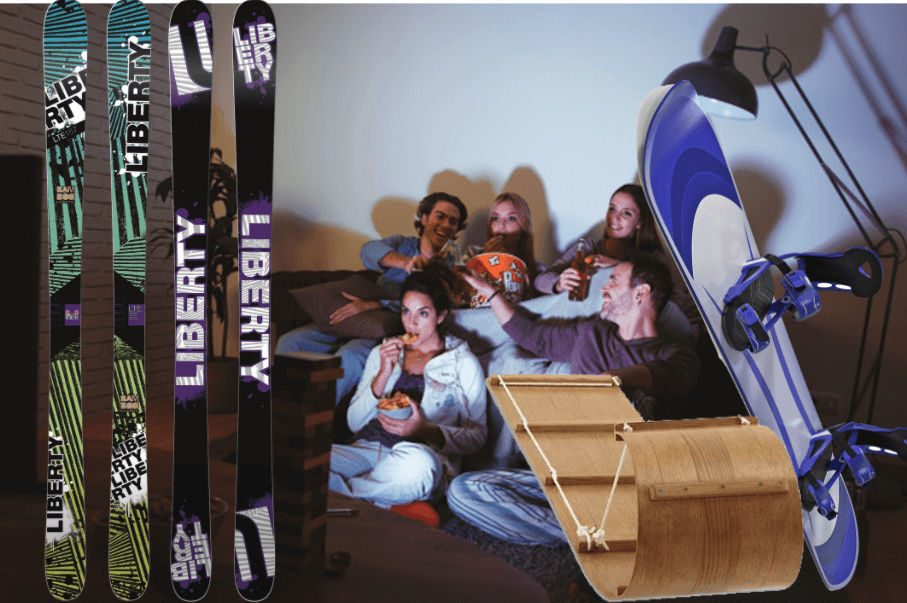

Picture it. It’s a warm summer evening and you’re hosting movie night. You fill bowls with popcorn and snacks, and double-check the download of the movie everyone in your crew finally agreed to watch. Your friends and family are due to arrive any minute. As you bring a tray of drinks into the viewing room, you see it. Skis, snowboards, and what now feels like a super-sized toboggan are strewn around the room, leaning against the couch and partially blocking your flat-screen.

You love winter activities almost as much as movie night. You simply don’t have room in your home to properly store all of the gear it requires – and buying a bigger house just to store all of your alpine gear isn’t in your budget. You could sell the equipment and hit up the rental place the next time the slopes call to you, but you love the way your stuff shreds the mountain. And that’s why you’ve laid ski poles across your sectional.

You check the time and scramble to stuff all of your snow gear into overflowing closets, under beds, and into various corners of your home. The toboggan is the last item – you don’t know where to put it. The doorbell rings. You lug the oversized sled to the door and let in your friend, who is early for what seems like the first time in your relationship. She can barely get through the front hallway with the waxed, wooden monstrosity standing between the two of you. She offers to help you toss it on top of the bed in the guest room. Without being asked, she texts you the name of a storage unit and tells you that it’s clean, cheap, and just about a mile down the road.

If you’re thinking to yourself – this seems vaguely familiar, but I prefer vacations on a beach, and my snorkeling equipment and sandals all stow nicely away in my Marie Kondo-influenced storage spaces – maybe something else is causing feelings of deja vu.

Your Logging Tool Isn’t a Data Lake

Your logging tool wasn’t designed to be used as a data lake. A lot of you, however, might be using it as one. I’ve spoken with several customers recently who say that they are using their SIEM or other log analytics tools as a data lake. This probably made more sense a few years ago, when the amount of data was a lot more manageable than it is today. Now, however, it’s frankly too expensive to retain your data in the tools you use to analyze it. For more on why log tools consume too many resources, read this article by our CEO, Clint Sharp.

There are good reasons to keep data around for longer retention periods: analyzing trends, conducting investigations, and meeting stringent compliance requirements are just a few. But the systems you use to analyze data are simply not the best places to store it. The vast majority of queries in logging systems run against data from the past few days. Queries on older data happen, but they are often less time-sensitive, so that data doesn’t need to live in block storage, which can cost as much as 100 times more than cloud and other object storage options.

Keeping several months of observability data in your logging systems also hurts query performance. Adding compute power to combat this performance degradation has diminishing returns and escalating costs. The increased costs and bloated infrastructure outweigh the convenience of having this data on hand and ready to analyze.
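To make the cost argument concrete, here is a minimal back-of-the-envelope sketch in Python. Every number in it is a hypothetical placeholder, not a real price: the daily volume, the retention period, the seven-day "hot" window, and the per-GB prices are all assumptions, with the 100x gap chosen to match the upper bound mentioned above. It counts storage only and ignores compute, licensing, and retrieval fees.

```python
def monthly_storage_cost(daily_gb, retention_days, hot_days, hot_price, cold_price):
    """Return the monthly storage cost of keeping `retention_days` of data.

    The newest `hot_days` of data stay in the analytics tool's storage;
    everything older is assumed to sit in cheaper object storage.
    """
    hot_days = min(hot_days, retention_days)
    hot_gb = daily_gb * hot_days
    cold_gb = daily_gb * (retention_days - hot_days)
    return hot_gb * hot_price + cold_gb * cold_price


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
DAILY_GB = 100        # data ingested per day
RETENTION_DAYS = 90   # how long the data must be kept
HOT_PRICE = 1.00      # placeholder price per GB-month in the logging tool
COLD_PRICE = 0.01     # placeholder price per GB-month in object storage (100x cheaper)

# Option 1: keep all 90 days in the logging tool.
all_hot = monthly_storage_cost(DAILY_GB, RETENTION_DAYS, RETENTION_DAYS, HOT_PRICE, COLD_PRICE)

# Option 2: keep only the last 7 days in the tool, the rest in object storage.
tiered = monthly_storage_cost(DAILY_GB, RETENTION_DAYS, 7, HOT_PRICE, COLD_PRICE)

print(f"all in the logging tool: {all_hot:,.2f} per month")
print(f"7 days hot, rest cold:   {tiered:,.2f} per month")
```

With these made-up inputs, almost all of the tiered bill comes from the seven days that stay in the logging tool. Swap in your own volumes and prices to see how the split changes.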

Storage Unit for Observability Data

There are many cheaper alternatives for long-term data storage, including file systems, dedicated data lakes, and object stores such as Amazon S3, MinIO, and Azure Blob Storage. Object storage in particular costs significantly less than storing data in your logging infrastructure. Read Scalable Data Collection from Azure Blob Storage for an example. Using these options for longer-term storage of observability data can cost a fraction of what you pay to keep that data in indexed logging tools.

Cribl® LogStream™ Replay lets you use object storage as a simple, efficient, cost-effective storage unit to keep data that you aren’t analyzing today, but may need later. Replay collects data from object storage and streams it to LogStream, where it is filtered, enriched, right-sized, and formatted for any analytics tool you need to use, whenever you want it. This is just like pulling your skis out of storage when news of fresh powder arrives. You don’t know what data you will need, or even if you will need it, but routing it to object storage for retention is a great way to avoid the bloated and expensive infrastructure associated with retaining it in your logging systems.
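The general idea of replaying archived data can be sketched in a few lines of Python. This is an illustration of the concept only, not how LogStream Replay is implemented or configured. The `replay` function, the object keys, and the event fields (`time`, `host`, `status`) are all hypothetical, and an in-memory dictionary of gzip-compressed, newline-delimited JSON stands in for a real object store.

```python
import gzip
import json
from datetime import datetime, timezone


def replay(objects, start, end, keep=lambda event: True):
    """Yield archived events whose timestamp falls in [start, end).

    `objects` maps an object key to gzip-compressed, newline-delimited JSON,
    standing in for files fetched from an object store.
    """
    for key in sorted(objects):
        text = gzip.decompress(objects[key]).decode("utf-8")
        for line in text.splitlines():
            if not line.strip():
                continue
            event = json.loads(line)
            ts = datetime.fromtimestamp(event["time"], tz=timezone.utc)
            # Only events in the requested window that pass the filter move on.
            if start <= ts < end and keep(event):
                yield event


def _archive(events):
    """Helper for this example: pack events the way they might be stored."""
    body = "\n".join(json.dumps(e) for e in events)
    return gzip.compress(body.encode("utf-8"))


# Hypothetical archive with one object per day.
archive = {
    "logs/2021/03/01.json.gz": _archive([
        {"time": 1614556800, "host": "web-1", "status": 200},  # 2021-03-01 00:00 UTC
        {"time": 1614560400, "host": "web-2", "status": 500},  # 2021-03-01 01:00 UTC
    ]),
    "logs/2021/03/02.json.gz": _archive([
        {"time": 1614643200, "host": "web-1", "status": 500},  # 2021-03-02 00:00 UTC
    ]),
}

# Pull back only the server errors from March 1.
replayed = list(replay(
    archive,
    start=datetime(2021, 3, 1, tzinfo=timezone.utc),
    end=datetime(2021, 3, 2, tzinfo=timezone.utc),
    keep=lambda e: e["status"] >= 500,
))
print(replayed)
```

The point of the sketch is that nothing needs to be indexed up front. The archive sits in cheap storage, and only the slice you ask for is read, filtered, and handed to an analytics tool.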

Keep a lot more, pay a lot less. To get a sense of how this works with LogStream, try one of our interactive sandbox courses that allow you to use the product in our demo environment. I’d recommend the course on Affordable Log Storage in S3. You can also join our community for more great tips from pros like you.