Working with data in Cribl LogStream is easiest when live events are flowing through the system. In this post, we’ll walk through how to generate sample data for iterating on Routes, Pipelines, and Function configurations, and for troubleshooting general issues.

In version 2.1, we introduced a new purpose-built feature called Datagens. Datagens replay template files at a given rate (Events Per Second, or EPS) and appear and behave exactly like regular Sources: their events can be routed, processed, and sent to a Destination just like any other events. Several datagen template files ship with the product out of the box, and you can create others from sample files or live captures.
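The post doesn’t describe how the datagen engine paces events internally, so the following is only a minimal Python sketch of "replay a template file at N EPS", assuming a simple round-robin replay of template lines spaced evenly at 1/EPS seconds. The functions `replay_schedule` and `emit` are hypothetical illustrations, not LogStream APIs.

```python
import time
from itertools import cycle, islice
from typing import Callable, List, Tuple


def replay_schedule(template_lines: List[str], eps: float, seconds: float) -> List[Tuple[float, str]]:
    """Plan (offset_seconds, event) pairs that cycle through template_lines
    at a fixed rate of `eps` events per second for `seconds` seconds."""
    if eps <= 0 or not template_lines:
        return []
    total = int(eps * seconds)   # number of events to emit in the window
    interval = 1.0 / eps         # even spacing between consecutive events
    lines = islice(cycle(template_lines), total)
    return [(i * interval, line) for i, line in enumerate(lines)]


def emit(template_lines: List[str], eps: float, seconds: float,
         send: Callable[[str], None] = print) -> None:
    """Send each planned event once its offset is reached, sleeping in between."""
    start = time.monotonic()
    for offset, line in replay_schedule(template_lines, eps, seconds):
        delay = start + offset - time.monotonic()
        if delay > 0:
            time.sleep(delay)
        send(line)
```

For example, `emit(lines, eps=5, seconds=2)` would send 10 events spaced 0.2 seconds apart, cycling through `lines`. Planning the schedule separately from sending keeps the pacing logic easy to test.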

Enabling datagens

To create a datagen, navigate to Sources > Datagens and click Add New. Select a Data Generator File from the dropdown (e.g., apache_common.log) and set it to 5 EPS per worker process. Hit Save. Repeat for another file, e.g., syslog.log, this time at 10 EPS per worker process.


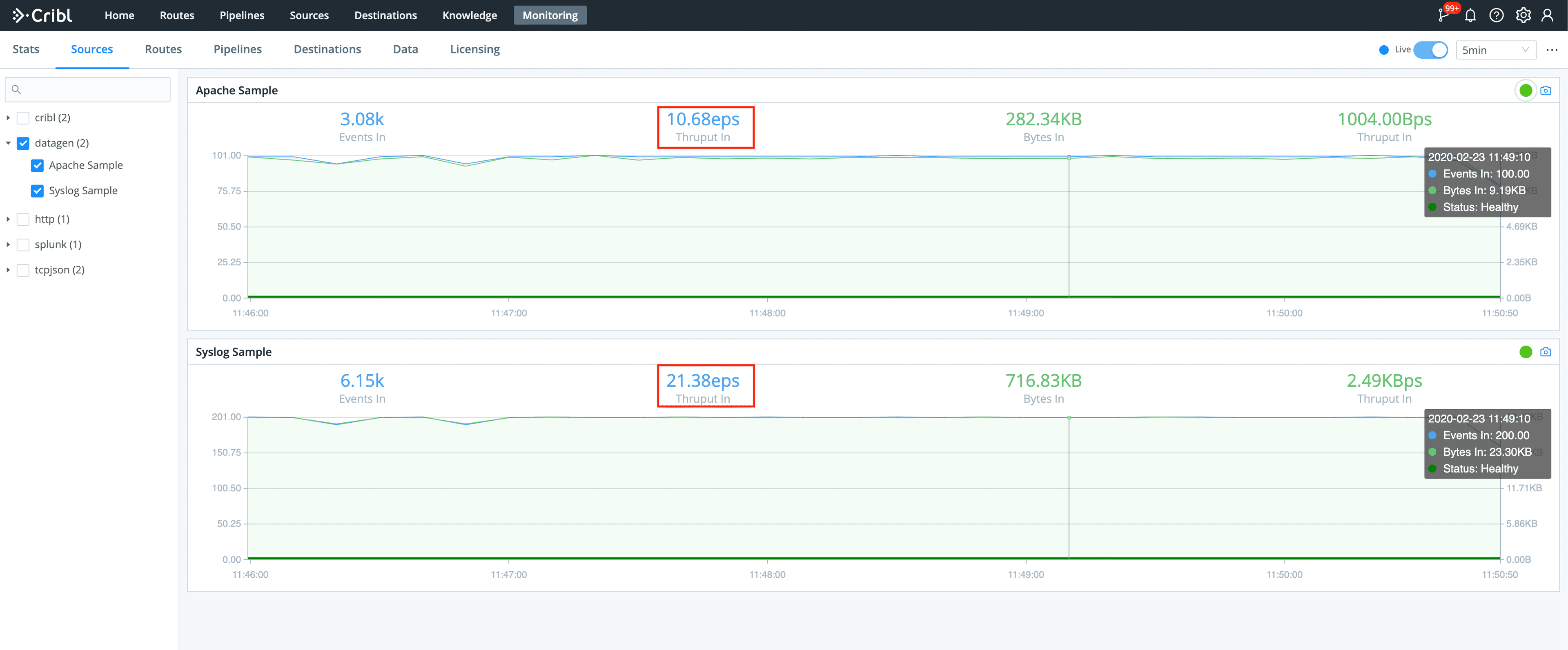

Next, go to Monitoring > Sources, select or search for datagen, and notice that both datagens are now delivering data at approximately the configured rate per worker process (in my case, I have two worker processes).

Yup, that’s it: from zero to a 30 EPS sample in less than 5 minutes!
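The 30 EPS figure comes from the per-process rates configured above multiplied by the two worker processes in this setup:

$$
\text{total} = (5 + 10)\ \frac{\text{events/s}}{\text{worker process}} \times 2\ \text{worker processes} = 30\ \text{events/s}
$$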

I want my own samples. Possible?

Of course! The Datagen engine can easily generate and replay templates from custom events. Go to Preview > Paste a Sample and add a sample, such as these AWS VPC Flow Logs:

2 123456789010 eni-abc123de 172.31.16.139 172.31.16.21 20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK

2 123456789010 eni-abc123de 172.31.9.69 172.31.9.12 49761 3389 6 20 4249 1418530010 1418530070 REJECT OK

2 123456789010 eni-1a2b3c4d - - - - - - - 1431280876 1431280934 - NODATA

2 123456789010 eni-4b118871 - - - - - - - 1431280876 1431280934 - SKIPDATA

2 123456789010 eni-1235b8ca 203.0.113.12 172.31.16.139 0 0 1 4 336 1432917027 1432917142 ACCEPT OK

2 123456789010 eni-1235b8ca 172.31.16.139 203.0.113.12 0 0 1 4 336 1432917094 1432917142 REJECT OK

2 123456789010 eni-f41c42bf 2001:db8:1234:a100:8d6e:3477:df66:f105 2001:db8:1234:a102:3304:8879:34cf:4071 34892 22 6 54 8855 1477913708 1477913820 ACCEPT OK

From the Event Breaker dropdown, select AWS VPC Flow so that the pasted text is broken into events properly (notice that the Event Breaker splits on newlines) and each event is timestamped correctly (highlighted in purple). Next, click Create A Datagen File.

Note: If your sample is in a different format and you can’t find a suitable Event Breaker, you can create one by expanding the Custom Breaker Settings section.
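To make the breaking and timestamping step concrete, here is a minimal Python sketch, assuming the default (version 2) VPC Flow Logs field order and assuming that the flow’s start field is the one used as the event time. The function `break_and_timestamp` and the `_raw`/`_time` keys are illustrative only; this is not how the AWS VPC Flow breaker is implemented inside LogStream.

```python
from typing import Dict, List, Union

# Field order of the default (version 2) AWS VPC Flow Logs format.
VPC_FIELDS = [
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
]


def break_and_timestamp(sample: str) -> List[Dict[str, Union[str, int]]]:
    """Split pasted text into one event per non-empty line and timestamp each
    event with its flow start time (epoch seconds)."""
    events: List[Dict[str, Union[str, int]]] = []
    for line in sample.splitlines():        # break events on newlines
        line = line.strip()
        if not line:
            continue                        # ignore blank separator lines
        values = line.split()
        if len(values) != len(VPC_FIELDS):
            raise ValueError(f"expected {len(VPC_FIELDS)} fields, got {len(values)}: {line!r}")
        event: Dict[str, Union[str, int]] = dict(zip(VPC_FIELDS, values))
        event["_raw"] = line                # keep the original text of the event
        event["_time"] = int(values[10])    # the "start" field, in epoch seconds
        events.append(event)
    return events
```

Note that NODATA and SKIPDATA records still carry start and end times, so every line in the sample above can be timestamped even though most of its other fields are `-`.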

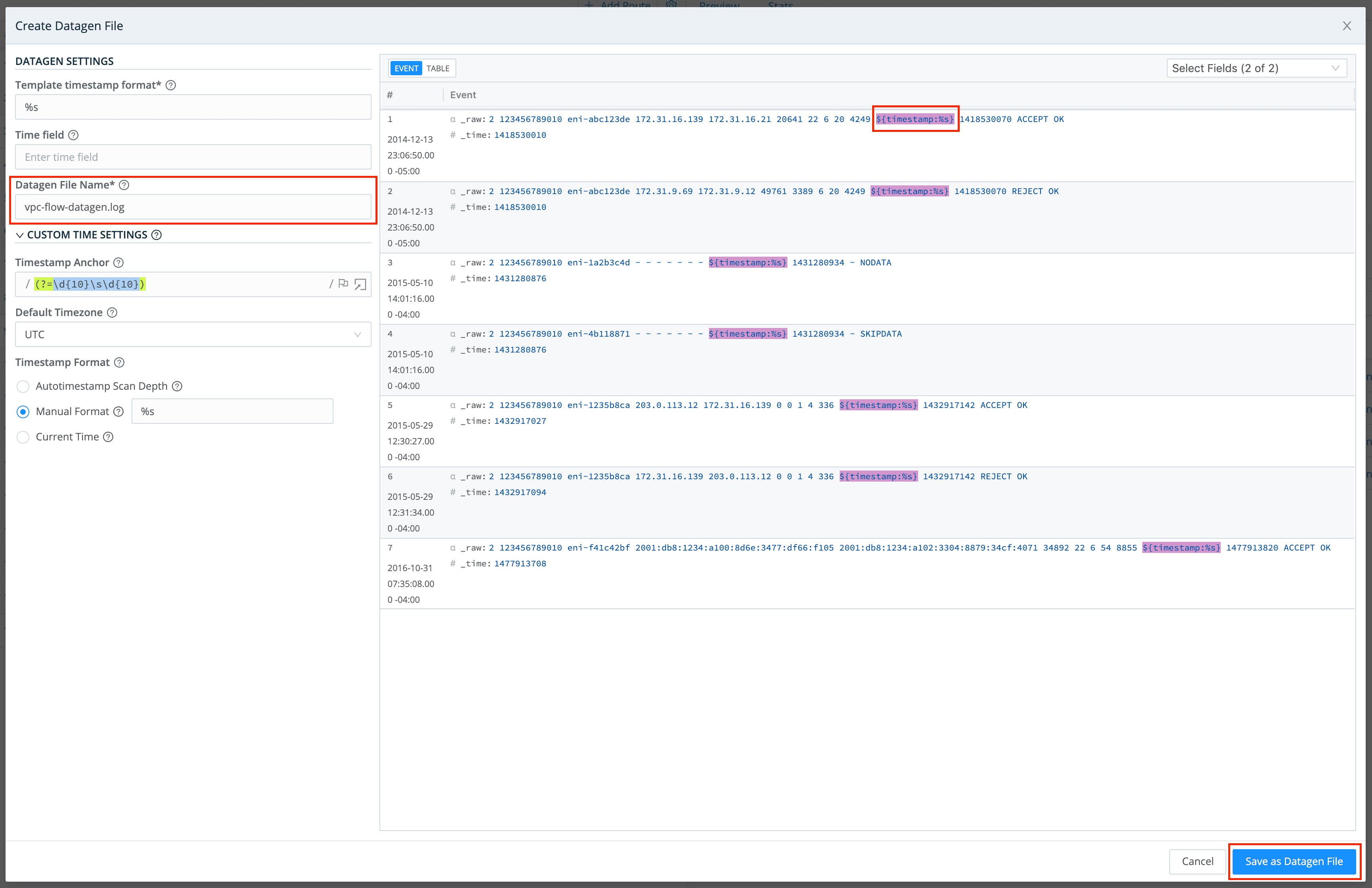

On the next screen, titled Create Datagen File, enter a file name (e.g., vpc-flow-datagen.log) and make sure that the timestamp template format is correct: ${timestamp: %s}. ${timestamp: <format>} is a literal template string that the datagen engine replaces with the current time, in the given format, in each newly generated event. In this case, %s is the strftime format for epoch seconds. Click Save as Datagen File.
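A minimal Python sketch of that substitution, assuming each line of the datagen file carries the ${timestamp: <format>} token where the original timestamp was (for the VPC sample, the start field). The function `render_template_line` is hypothetical, and rendering formats other than %s in UTC is an assumption of this sketch rather than documented LogStream behavior.

```python
import re
import time
from typing import Optional

# Matches the literal template token, e.g. "${timestamp: %s}" or "${timestamp: %Y-%m-%d}".
TIMESTAMP_TOKEN = re.compile(r"\$\{timestamp:\s*([^}]*)\}")


def render_template_line(line: str, now: Optional[float] = None) -> str:
    """Replace every ${timestamp: <format>} token in `line` with the time `now`
    (default: the current time) formatted according to <format>."""
    ts = time.time() if now is None else now

    def substitute(match) -> str:
        fmt = match.group(1).strip()
        if fmt == "%s":
            # Epoch seconds. Python's strftime does not portably support %s,
            # so compute it directly.
            return str(int(ts))
        # Any other strftime format is rendered in UTC (an assumption of this sketch).
        return time.strftime(fmt, time.gmtime(ts))

    return TIMESTAMP_TOKEN.sub(substitute, line)
```

Each call stamps a freshly generated event with the time it was produced, which is why replayed events look live rather than carrying the original sample’s 2014-era timestamps.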

To start using the newly created datagen file, go back to Sources > Datagens and add a datagen that uses it, as we did above.

Done!

—

If you’re trying to deploy LogStream in your environment or have any questions, please join our community Slack; we’d love to help you out. If you’re looking to join a fast-paced, innovative team, drop us a line at hello@cribl.io. We’re hiring!