Today’s enterprises must be able to cope with growing volumes of observability data, including metrics, logs, and traces. This data is a critical asset for IT operations teams, site reliability engineers (SREs), and security teams, who are responsible for keeping data and infrastructure performant and protected. As systems become more complex, the ability to manage and analyze observability data effectively becomes increasingly important.

However, observability data is not just an asset; it can also be a liability. Its growing volume makes it expensive to store and process. Observability data also often contains sensitive information that must be governed and managed according to strict regulations. The growing number of products that generate and analyze observability data, including security tools, time series databases, and log analytics platforms, further complicates its management. Organizations therefore need a solution that can manage and process observability data effectively, both to avoid unnecessary costs and to ensure regulatory compliance.

Why Are Observability Pipelines Important?

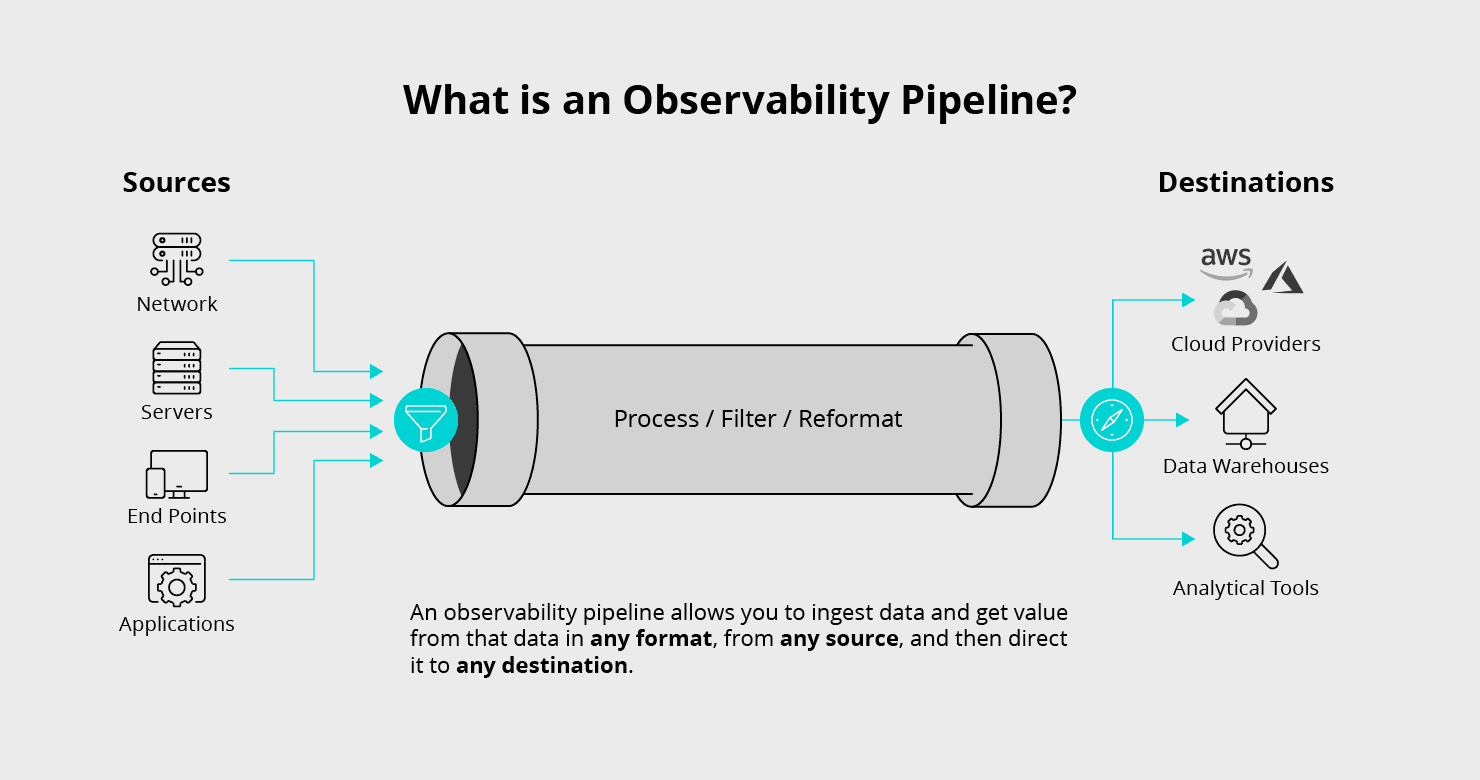

To address these challenges, observability pipelines have emerged as a preferred solution. An observability pipeline is a stream processing engine that unifies data processing across all types of observability data. It collects the required data, enriches it with additional context, eliminates noise and waste, and delivers the result to any tool within the organization. Because observability pipelines provide a centralized platform for managing and processing observability data, they make it easier for teams to detect and respond to issues quickly. They enable organizations to analyze and visualize large volumes of data in real time, helping teams make better-informed decisions about their systems. With observability pipelines, organizations can manage their observability data efficiently, reducing costs and supporting regulatory compliance.
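To make the collect, enrich, filter, and deliver stages described above more concrete, here is a minimal Python sketch. It does not reflect how any particular product works: the `Event` type, the rule that treats `DEBUG` events as noise, the host-metadata lookup table, and the in-memory lists standing in for a SIEM and a log analytics platform are all illustrative assumptions.

```python
from dataclasses import dataclass, field, replace
from typing import Callable, Iterable


# Hypothetical event shape; real pipelines handle metrics, logs, and traces
# with much richer schemas.
@dataclass
class Event:
    source: str
    level: str
    message: str
    attrs: dict = field(default_factory=dict)


# A route pairs a predicate with a destination (a plain list stands in for a tool).
Route = tuple[Callable[[Event], bool], list]


def is_noise(event: Event) -> bool:
    # Illustrative noise rule: drop debug-level events before they reach any tool.
    return event.level == "DEBUG"


def enrich(event: Event, context: dict[str, dict]) -> Event:
    # Add context from a hypothetical lookup table keyed by source.
    # Returns a new Event instead of mutating the input.
    return replace(event, attrs={**event.attrs, **context.get(event.source, {})})


def run_pipeline(events: Iterable[Event], context: dict[str, dict], routes: list[Route]) -> None:
    for event in events:                # collect
        if is_noise(event):             # eliminate noise
            continue
        event = enrich(event, context)  # enrich
        for matches, sink in routes:    # deliver to every matching destination
            if matches(event):
                sink.append(event)


# Usage: lists stand in for a SIEM and a log analytics platform.
siem: list[Event] = []
log_store: list[Event] = []
routes: list[Route] = [
    (lambda e: e.level in ("WARN", "ERROR"), siem),
    (lambda e: True, log_store),
]
events = [
    Event("web-1", "DEBUG", "cache hit"),
    Event("web-1", "ERROR", "upstream timeout"),
    Event("db-1", "INFO", "checkpoint complete"),
]
context = {"web-1": {"team": "frontend", "region": "us-east"}}
run_pipeline(events, context, routes)
print(len(siem), len(log_store))  # 1 2
```

Modeling routes as predicates lets a single event fan out to several destinations (here, the error reaches both the SIEM and the log store), while noise is dropped once, before any tool pays to store it. A production pipeline would run these stages continuously over unbounded streams rather than over a finite list.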

In the past, organizations combined multiple tools to replicate some of the features of observability pipelines. However, the introduction of purpose-built products is accelerating the deployment and use of observability pipelines. These products offer a single console for managing observability data, along with advanced features for data processing, analysis, and visualization. Because they are designed to address the specific challenges of managing observability data, they are more effective than cobbled-together tools, which are often poorly supported and require significant maintenance. As a result, they are becoming increasingly popular for managing observability and security data across industries.

IT operations teams were early adopters of observability pipeline capabilities, but as data volumes skyrocketed, these tools quickly demonstrated their value in security and data governance use cases as well. Observability pipelines can help organizations manage and analyze large volumes of data in real time, enabling them to detect and respond to security incidents more quickly. They can also help organizations comply with data governance regulations, such as GDPR and CCPA, by providing a centralized platform for managing observability data across multiple tools. As a result, observability pipelines have become critical tools for security and data governance professionals, not only for IT operations teams.