When architects and engineers decide to adopt a new operational and security analytics platform, such as Exabeam SIEM and UEBA, they know they are either buying into the vendor's ecosystem or going to spend a ton of time building custom integrations. More often than not, they buy from the vendor with the biggest ecosystem, since they don't have the time to build and support endless integrations. Building and owning all those integrations is just not worth the engineering time. It is an old story, but with Cribl Stream, customers now have the option to integrate the tools they want into their environment without being limited by time-consuming integration challenges.

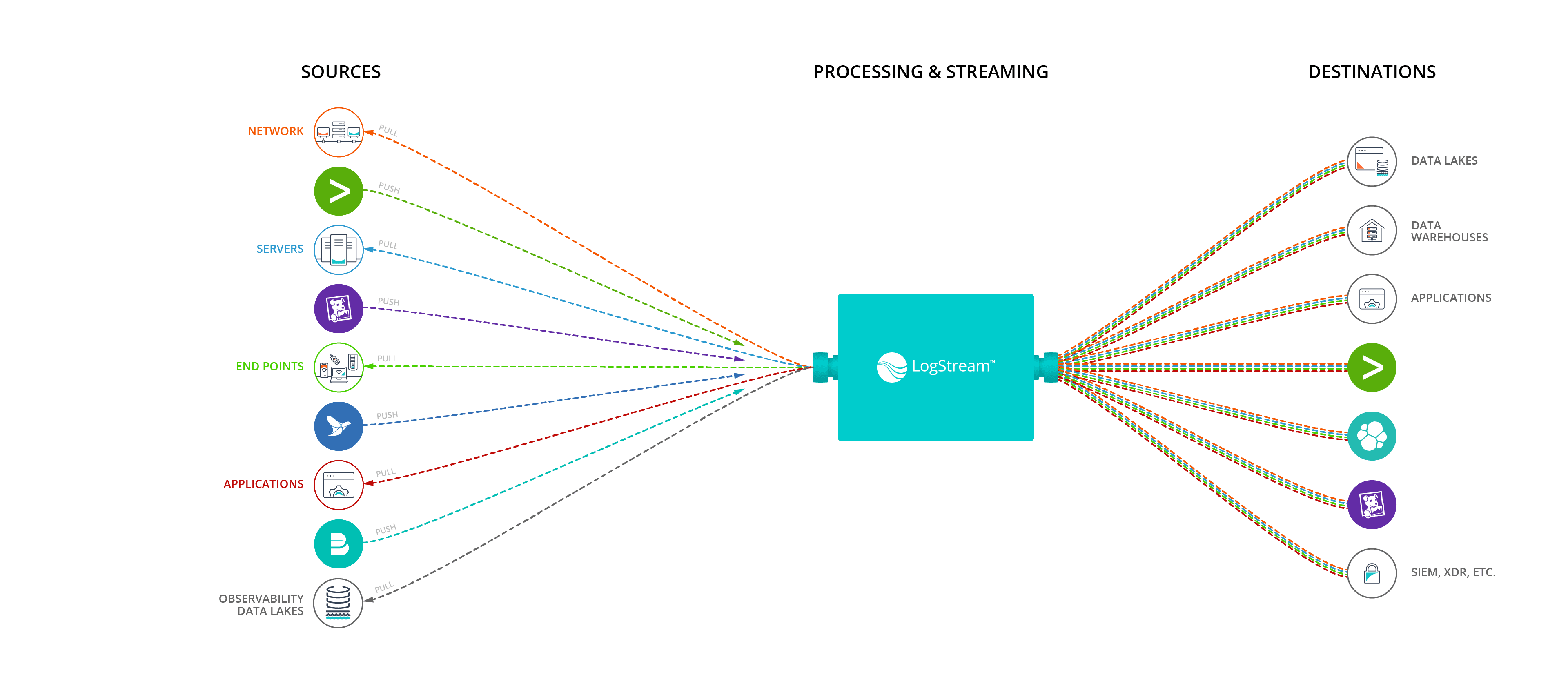

Stream turns the observability pipeline from a concept into a reality, removing barriers to integration between tools.

An observability team can pick and choose best-of-breed tools, such as:

Splunk for operational logging

Splunk ES to power your SOC

Exabeam UEBA to prevent insider threats

ExtraHop to provide NDR

Tines as a SOAR platform

Stream is the glue that ties these products together into an effective, cohesive solution, with minimal engineering time spent maintaining integrations. You get all the benefits of best-of-breed tools without the risk and time commitment of owning the integrations yourself.

Mix and Match Operational Logging and UEBA Tools

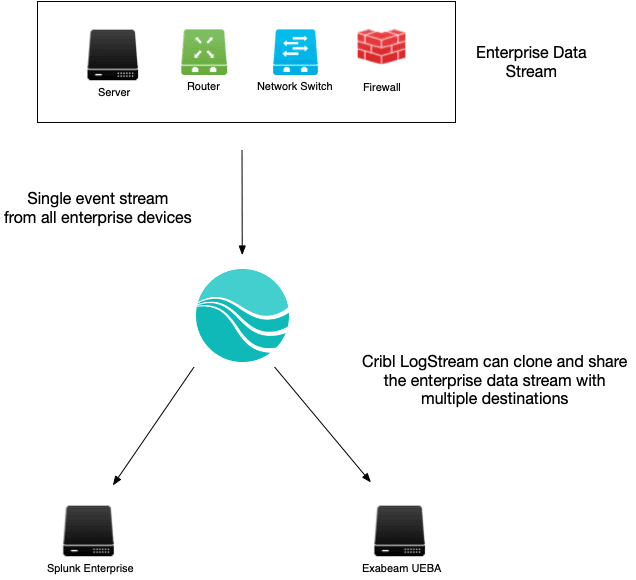

In my past life at TransUnion, I owned Splunk for operations and security observability. The security team proposed installing Exabeam's UEBA solution alongside Splunk Enterprise to get better UEBA capabilities than Splunk offered. I was very concerned, since Exabeam was not in the Splunk ecosystem. Both tools needed the same data, but how could we share it, given that:

Splunk did not offer any reasonable integration options

Exabeam suggested installing its agents in parallel with Splunk, which would duplicate data collection and was a nonstarter; the server admins would put a hit out on me for adding a new agent to every server.

Exabeam could also query Splunk for its data, but that introduced significant latency and overhead into the Splunk environment.

I needed a way to achieve integration without impacting our overall observability solution.

Cribl Stream was the best option: not only did it make integrating Exabeam UEBA into our stack easy, it also de-risked adding a new tool to our observability stack. We could change the data feed into Exabeam UEBA at any time with no impact on Splunk. Stream removed Splunk as a dependency in the implementation. Using Stream, the implementation timeline went from months to weeks.

Architecture

Cribl Stream sits between your data sources and destinations. In a Splunk shop, it takes feeds from the Splunk Universal Forwarders (UFs) and the rest of the environment and then passes that data to Splunk. To add Exabeam to the data flow, you would use:

A filter to identify your in-scope Exabeam data, such as Windows Security logging

A pipeline to alter the cloned data to fit the format that Exabeam expects from Windows Security logging

An output router that takes the data flow and sends the right data to Exabeam in the right format.
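To make the three pieces above concrete, here is a minimal sketch in plain JavaScript that models a route which is not final: events that match the filter are cloned, the clone runs through an Exabeam pipeline, and every original event still continues toward Splunk. This is an illustrative model, not how Stream is implemented internally; `routeEvents`, the `formattedForExabeam` flag, and the sample events are all hypothetical.

```javascript
// Minimal model of a non-final route that clones matching events.
// HYPOTHETICAL names: routeEvents, filter, pipeline are not Stream APIs.
function routeEvents(events, { filter, pipeline }) {
  const splunk = [];
  const exabeam = [];
  for (const event of events) {
    if (filter(event)) {
      // Shallow-copy first so the pipeline can never alter the Splunk copy
      // (a shallow copy is enough for flat events like the ones below).
      exabeam.push(pipeline({ ...event }));
    }
    // The route is not final, so every event still continues toward Splunk.
    splunk.push(event);
  }
  return { splunk, exabeam };
}

// Illustrative events; only the first one is in scope for Exabeam.
const events = [
  { sourcetype: 'XmlWinEventLog:Microsoft-Windows-Security', _raw: '<Event/>' },
  { sourcetype: 'access_combined', _raw: 'GET / HTTP/1.1' },
];

const result = routeEvents(events, {
  filter: (e) => e.sourcetype === 'XmlWinEventLog:Microsoft-Windows-Security',
  // A pipeline that mutates its input, to show the clone protects the original.
  pipeline: (e) => {
    e.formattedForExabeam = true;
    return e;
  },
});

console.log(result.splunk.length, result.exabeam.length); // 2 1
console.log(events[0].formattedForExabeam); // undefined
```

The clone is the design choice that matters: because the Exabeam branch works on a copy, changes made for Exabeam's parsers never leak into what Splunk indexes.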

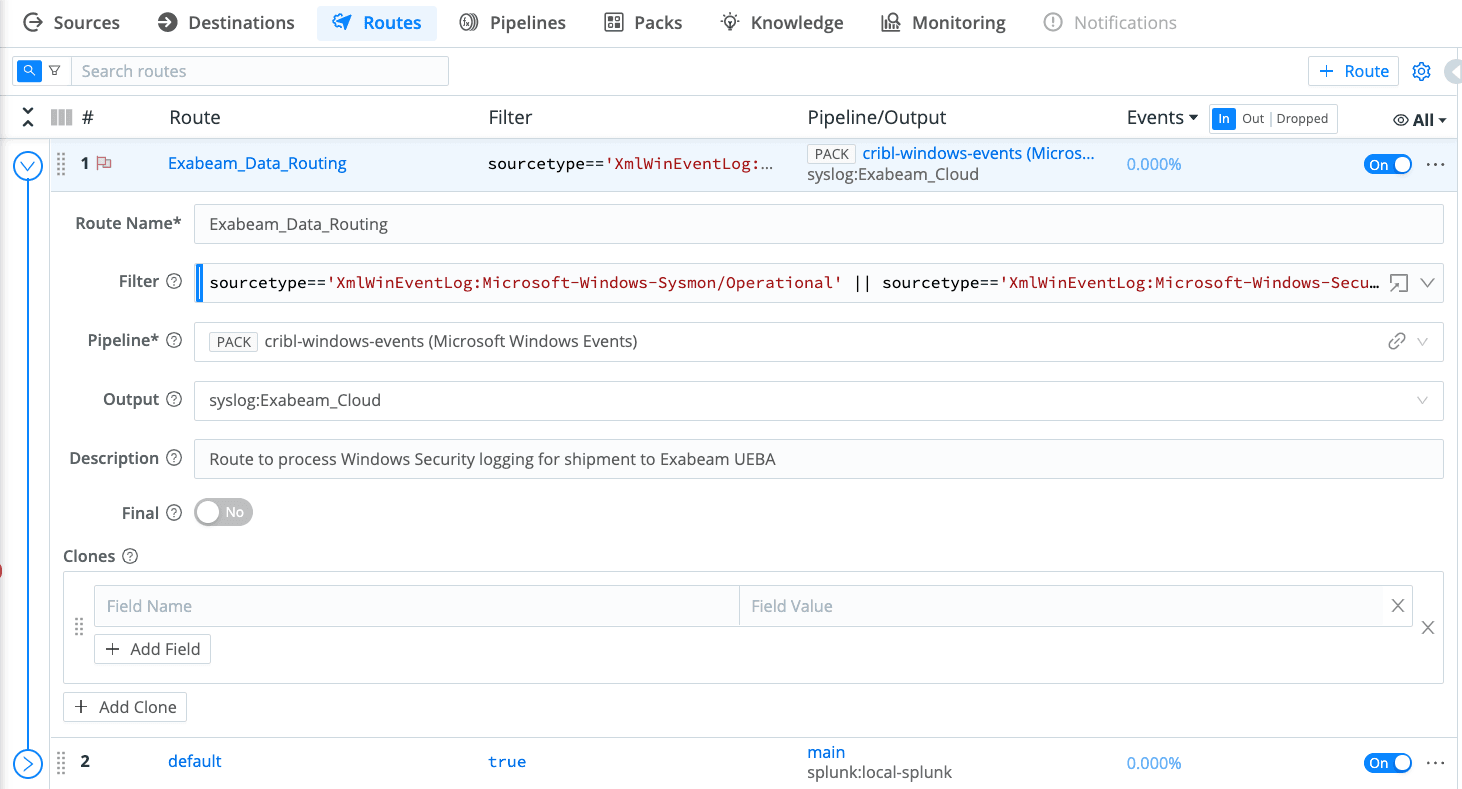

Stream Route for Exabeam Processing

The route uses two sourcetypes to build its filter; you can easily add more sourcetypes to the filter below as your UEBA program expands.

sourcetype=='XmlWinEventLog:Microsoft-Windows-Sysmon/Operational' || sourcetype=='XmlWinEventLog:Microsoft-Windows-Security'

The filter directs a copy of the data to the Exabeam processing pipeline and then to the Exabeam Destination. The event stream then continues down to the next route. Since the Exabeam route clones the data stream, nothing is lost by sending data to Exabeam.
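As the list of sourcetypes grows, a chain of `||` clauses gets hard to read. One alternative, sketched below in plain JavaScript, keeps the in-scope sourcetypes in an array and tests membership, then checks that it agrees with the two-clause filter. The names `EXABEAM_SOURCETYPES`, `isExabeamInScope`, and `originalFilter` are hypothetical, and the sample events are invented.

```javascript
// In-scope sourcetypes for Exabeam; extend this list as the UEBA program grows.
const EXABEAM_SOURCETYPES = [
  'XmlWinEventLog:Microsoft-Windows-Sysmon/Operational',
  'XmlWinEventLog:Microsoft-Windows-Security',
];

// List-based version of the route filter.
function isExabeamInScope({ sourcetype }) {
  return EXABEAM_SOURCETYPES.includes(sourcetype);
}

// The two-clause filter from the route, written as a function for comparison.
function originalFilter({ sourcetype }) {
  return (
    sourcetype === 'XmlWinEventLog:Microsoft-Windows-Sysmon/Operational' ||
    sourcetype === 'XmlWinEventLog:Microsoft-Windows-Security'
  );
}

const samples = [
  { sourcetype: 'XmlWinEventLog:Microsoft-Windows-Security' },
  { sourcetype: 'XmlWinEventLog:Microsoft-Windows-Sysmon/Operational' },
  { sourcetype: 'XmlWinEventLog:Application' },
];

// Both filters should agree on every sample.
for (const e of samples) {
  console.log(e.sourcetype, isExabeamInScope(e), originalFilter(e));
}
```

In a route, the corresponding filter would be something like `['XmlWinEventLog:Microsoft-Windows-Sysmon/Operational', 'XmlWinEventLog:Microsoft-Windows-Security'].includes(sourcetype)`. That this form evaluates correctly in your Stream version is an assumption; test it against sample events before relying on it.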

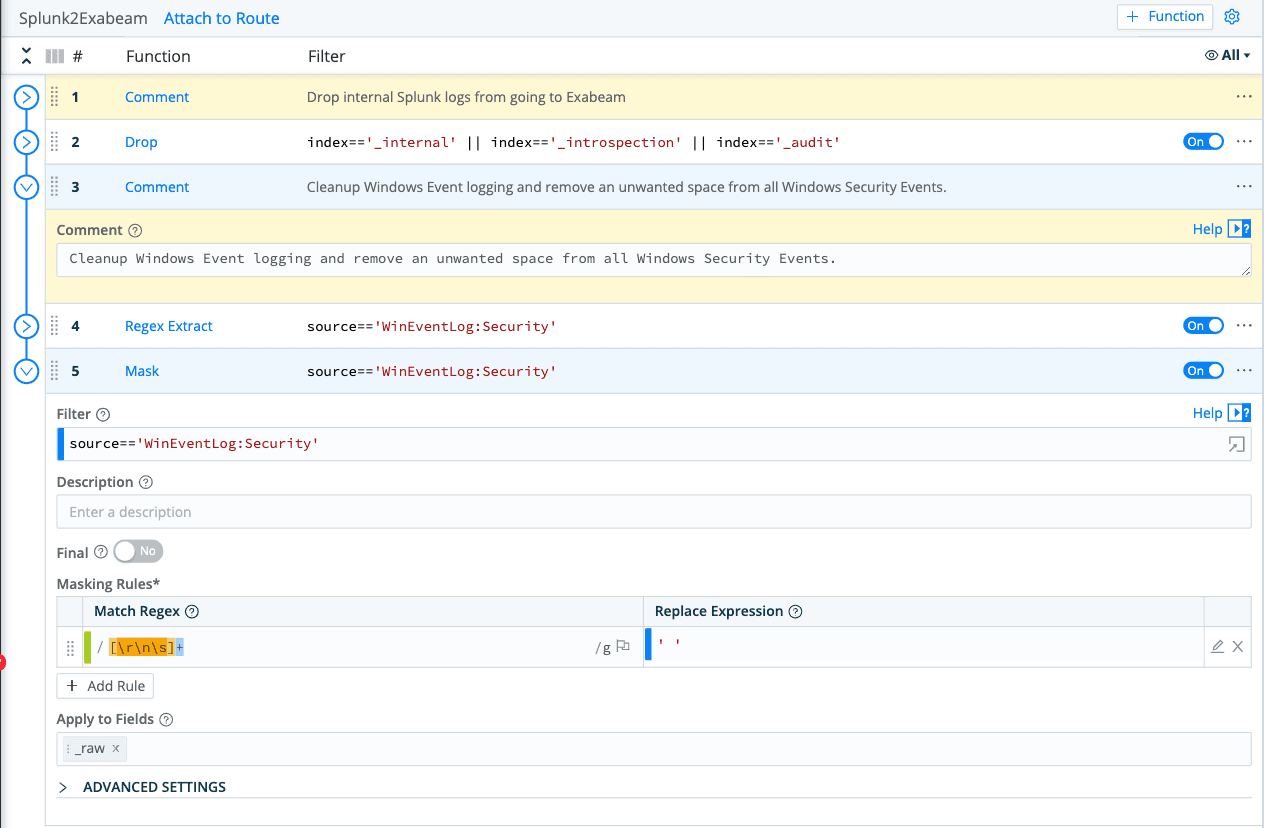

Stream Pipeline for Exabeam Processing

The Exabeam processing pipeline is where you make format changes so your data matches Exabeam's default parsers for Windows. Transforming your data to match those default parsers shortens your development cycle and gets your Exabeam toolset into production faster. For example, Splunk's Windows Classic data contains an extra space that Exabeam's parser does not expect. Your Exabeam pipeline needs only a single regex pattern to remove that extra space so the parser can process the data cleanly.
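The source does not say exactly where the extra space appears, so the sketch below rests on a loudly labeled assumption: that it shows up as a run of two or more spaces in `_raw`. It only illustrates the shape of a single-regex fix; `EXTRA_SPACE`, `removeExtraSpace`, and the sample event are hypothetical, and the real pattern should come from comparing your data with your Exabeam administrator.

```javascript
// HYPOTHETICAL pattern: treats the "extra space" as a run of two or more
// spaces in _raw. Confirm the real location against your own Windows data.
const EXTRA_SPACE = / {2,}/g;

// Returns a new event with the extra space collapsed; the input is untouched.
function removeExtraSpace(event) {
  return { ...event, _raw: event._raw.replace(EXTRA_SPACE, ' ') };
}

// Invented sample event, for illustration only.
const sample = {
  sourcetype: 'WinEventLog:Security',
  _raw: 'EventCode=4624  LogName=Security',
};

console.log(removeExtraSpace(sample)._raw); // EventCode=4624 LogName=Security
```

In Stream, an expression such as `_raw.replace(/ {2,}/g, ' ')` could plausibly live in an Eval function that reassigns `_raw`, or the same regex could go into a Mask function; treat either placement as an assumption to verify. A broad pattern like this one can also collapse spaces you want to keep in message text, so anchor it more narrowly once you know where the extra space really occurs.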

Stream Destination for Exabeam Processing

Exabeam Cloud uses a platform called a Site Collector to capture data. Its default ingest method is syslog on port 514, which it uses for most data feeds. Use the default syslog destination in Stream to push data to your Exabeam Site Collectors. If you need to push JSON-formatted data to Exabeam Cloud, consult Exabeam support first: it requires a slightly different approach in which you update your Site Collector configuration.
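Stream's syslog destination builds and sends syslog messages for you, but when validating what arrives at a Site Collector on port 514 it can help to know what a framed message looks like. The sketch below formats an RFC 5424 message, where the priority value is facility × 8 + severity. Whether your Site Collector expects RFC 5424 or RFC 3164 formatting is an assumption to confirm with your Exabeam administrator; `syslogPri`, `formatRfc5424`, and the host and app names are hypothetical.

```javascript
// Syslog PRI = facility * 8 + severity (RFC 5424, section 6.2.1).
function syslogPri(facility, severity) {
  return facility * 8 + severity;
}

// Build an RFC 5424 message. "-" is the NILVALUE for PROCID, MSGID, and
// STRUCTURED-DATA, which this sketch leaves empty.
// Defaults: facility 1 (user-level), severity 6 (informational).
function formatRfc5424({ facility = 1, severity = 6, timestamp, hostname, appName, msg }) {
  const pri = syslogPri(facility, severity);
  return `<${pri}>1 ${timestamp} ${hostname} ${appName} - - - ${msg}`;
}

const line = formatRfc5424({
  timestamp: '2024-01-01T00:00:00.000Z',
  hostname: 'stream-worker-01', // hypothetical Stream worker host
  appName: 'cribl', // hypothetical app name
  msg: 'EventCode=4624 LogName=Security', // invented event body
});

console.log(line);
// <14>1 2024-01-01T00:00:00.000Z stream-worker-01 cribl - - - EventCode=4624 LogName=Security
```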

I highly recommend adding data sources one by one while working closely with your Exabeam administrator to validate the data and make sure the Exabeam parser works correctly. In addition, Exabeam's machine learning can take up to two weeks to create a baseline, so be prepared to make changes after initial onboarding. You will have to be patient as you validate that each data source is working correctly.

Bottom Line

With a few lines of code, you can get the right data to Exabeam without impacting your existing logging solution. Your security stack improves without the risk of major disruption normally associated with installing a UEBA or SIEM platform, and your observability team is not overwhelmed with work. Cribl Stream lets you adopt significant technology like Exabeam UEBA with less effort and risk than would otherwise be possible.

Try Cribl’s free, hosted LogStream Sandbox. I’d love to hear your feedback; after you run through the sandbox, connect with me on LinkedIn, or join our community Slack and let’s talk about your experience!

The fastest way to get started with Cribl Stream is to sign up at Cribl.Cloud. You can process up to 1 TB of throughput per day at no cost. Sign up and start using Stream within a few minutes.