Cribl empowers you to take control of your observability, telemetry, and security data. Wherever your data originates, wherever it needs to go, and whatever format it needs to be in, Cribl gives you the freedom and flexibility to make choices instead of compromises. As the threat surface continues to expand, closing visibility gaps by ingesting more data sources has been a challenge. Cribl Stream, a modern observability pipeline, addresses this challenge by replacing legacy logging infrastructure with a single, centrally managed console that routes data to the appropriate systems of analysis and retention. Endpoint Detection and Response (EDR) data is arguably one of the most important data sources within the Security Operations Center (SOC), and we are going to explore the value that Cribl Stream brings to the table with EDR data from SentinelOne.

SentinelOne Singularity EDR/XDR protects organizations by providing real-time detection, protection, and response capabilities. The endpoint data, alongside other XDR relevant data, is sent back to the SentinelOne cloud-first Singularity XDR portal. This valuable data is stored within the XDR DataLake for threat hunting and correlation.

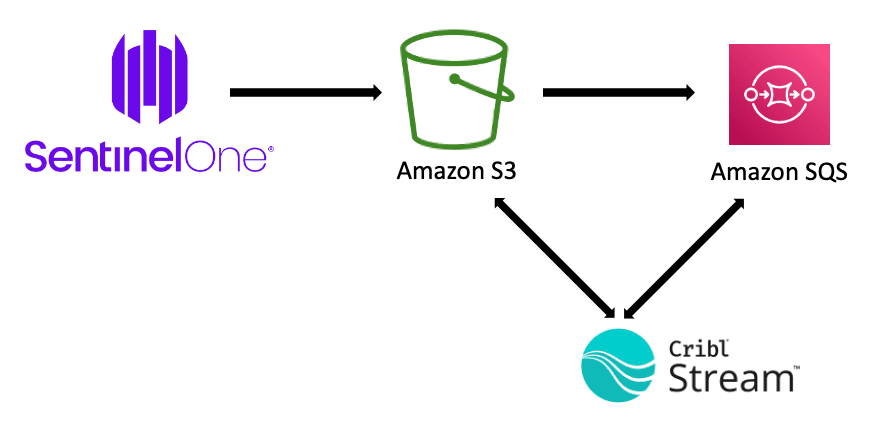

Many Enterprise, Managed Detection and Response (MDR), or Managed Security Service Provider (MSSP) organizations prefer to leverage this data within their own object store or analytic platforms such as SIEM or SOAR tools. There are two versions of Cloud Funnel that enable you to stream data to an Amazon S3 bucket, where Cribl can be used to enrich, optimize, and route it to one or more destinations. Cloud Funnel version 1 streams data to a SentinelOne-owned AWS S3 bucket, while version 2 streams the data directly to a customer-owned AWS S3 bucket.

This Cloud Funnel streamed data provides deep visibility into the endpoint and is now more relevant than ever considering the move from corporate networks to a remote workforce. Many organizations depend on EDR data to quantitatively address visibility gaps while aligning to a cyber security framework such as MITRE ATT&CK. This increased visibility results in a large increase in data volumes and processing requirements for enterprise SIEM/SOAR platforms.

Cloud Funnel version 2 consists of the following event categories:

Cross Process

DNS

Driver

File

Group

Indicator

IP

Logins

Module

Process

Registry

Scheduled Tasks

URL

The increased visibility provided by Cloud Funnel results in alerts containing rich security-relevant context which reduces the time Security Analysts spend triaging or investigating alerts. From a Threat Intelligence perspective, the SOC also has a much richer collection of possible Indicators of Compromise (IOC) due to the presence of hashes, domain names, IP Addresses, commands, registry locations, etc. These IOCs form the foundation not just for matching against known indicators but also for expanding existing threat hunting functions.
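To make the "matching against known indicators" idea concrete, here is a minimal Python sketch. It is not how the pack or SentinelOne implements matching. The field names (`sha256`, `domain`, `dst_ip`) are hypothetical placeholders, not actual Cloud Funnel field names, and the indicator set stands in for whatever threat intelligence feed you use.

```python
# Hypothetical event field names that may carry an indicator value.
IOC_FIELDS = ("sha256", "domain", "dst_ip")


def match_iocs(event, known_indicators):
    """Return (field, value) pairs whose value appears in known_indicators.

    Values are lowercased before comparison, so known_indicators is
    expected to hold lowercase strings.
    """
    hits = []
    for field in IOC_FIELDS:
        value = event.get(field)
        if value is None:
            continue
        normalized = str(value).lower()
        if normalized in known_indicators:
            hits.append((field, normalized))
    return hits


# Example with reserved documentation values only.
known = {"malicious.example", "203.0.113.7"}
event = {"domain": "Malicious.Example", "dst_ip": "198.51.100.4", "sha256": None}
hits = match_iocs(event, known)
```

The same loop works for any indicator type as long as both sides are normalized the same way before comparison.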

Suspicious activities aligned to MITRE ATT&CK techniques are often analyzed by threat hunters or detection engineers to examine behavioral aggregations, emerging threats, or entity relationships. For example, while the EDR data can be very noisy, even when aligned to MITRE ATT&CK, the process of identifying systems or users with activity spanning multiple techniques or tactics becomes very effective.
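One way to picture the multi-technique aggregation described above is the following Python sketch. It assumes each detection has already been reduced to a hypothetical `host` and `technique_id` pair. Those names and the threshold of three are illustrative choices, not something defined by Cloud Funnel or the pack.

```python
from collections import defaultdict


def hosts_spanning_techniques(events, min_techniques=3):
    """Return {host: sorted technique IDs} for hosts observed with at
    least `min_techniques` distinct ATT&CK techniques."""
    seen = defaultdict(set)
    for event in events:
        host = event.get("host")
        technique = event.get("technique_id")
        if host and technique:
            seen[host].add(technique)
    return {
        host: sorted(techniques)
        for host, techniques in seen.items()
        if len(techniques) >= min_techniques
    }


sample_events = [
    {"host": "ws-01", "technique_id": "T1059"},
    {"host": "ws-01", "technique_id": "T1547"},
    {"host": "ws-01", "technique_id": "T1003"},
    {"host": "ws-02", "technique_id": "T1059"},
    {"host": "ws-02", "technique_id": "T1059"},  # repeat, still one technique
]
flagged = hosts_spanning_techniques(sample_events)
```

A single noisy technique on a host is ignored here, while a host that accumulates several distinct techniques surfaces for review.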

While quantitatively improving coverage of the MITRE ATT&CK matrix with EDR-based detections, the process of validating your controls becomes increasingly important as the Tactics, Techniques, and Procedures (TTP) leveraged by threat actors are constantly evolving. There are many vendors and tools providing Adversary Simulation services that are aligned to MITRE ATT&CK which effectively closes the loop on a continual validation process.

Cribl Pack for SentinelOne Cloud Funnel

The Cribl Pack for SentinelOne Cloud Funnel interacts with the S3-sourced EDR data provided by the SentinelOne Cloud Funnel service to transform, enrich, drop, and route events. This pack supports both version 1 and version 2 formatting of the Cloud Funnel data and contains dedicated pipelines for event types and categories. Each pipeline includes functions that parse, determine which fields to keep, drop events, enrich with reference CMDB context, and transform back to JSON.

The pack also includes sample data captures for every event type and category so that you can transform and run test deliveries of Cloud Funnel data to your destination platforms before opening your production data feed. A compact version of the Majestic Million domain listing is included in this pack. Please note that the domains and rankings within this file are updated more frequently than this Cribl pack will be, so depending on your needs, you may need to refresh the lookup contained in this pack from the Majestic Million site. This context is helpful within certain event types or categories for determining whether interaction with well-known domains needs to be sent to systems of analysis/record when considering ingest cost, retention cost, or system performance. DNS is the event type most likely to include a function that drops all DNS records for the top X domains when routing to your SIEM tool, as these records provide little value for most organizations from a detection, investigation, threat hunting, or threat intelligence perspective. Cribl Stream can always route full copies of this DNS data to a low-cost object store if desired, and you can then use the Replay functionality to access it later.
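If you need to refresh the compact lookup yourself, a sketch along these lines may help. It assumes the downloaded Majestic Million CSV has `GlobalRank` and `Domain` columns, so verify the header of the file you actually download. The output column names (`domain`, `rank`) are hypothetical and are not necessarily what the lookup shipped in the pack uses.

```python
import csv
import io


def compact_majestic(csv_text, top_n=1000):
    """Reduce a Majestic Million style CSV to 'domain,rank' rows for the
    top `top_n` domains. Assumes 'GlobalRank' and 'Domain' columns."""
    reader = csv.DictReader(io.StringIO(csv_text))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["domain", "rank"])
    for row in reader:
        rank = int(row["GlobalRank"])
        if rank > top_n:
            continue
        writer.writerow([row["Domain"].lower(), rank])
    return out.getvalue()


# Tiny stand-in for the real file, using reserved example domains.
sample = (
    "GlobalRank,TldRank,Domain,TLD\n"
    "1,1,example.com,com\n"
    "2,1,Example.org,org\n"
    "3,2,example.net,net\n"
)
compact = compact_majestic(sample, top_n=2)
```

Keeping only the two columns you look up against keeps the file small enough to ship comfortably as a local lookup.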

Cribl Stream Ingest

The following items need to be performed for Cribl Stream to pull data from the S3 bucket:

Configure AWS

Configure the SentinelOne Singularity console

Configure a Cribl Stream S3 Source tile

Configure AWS

You will need to use a combination of ACLs, Policies, and Roles within AWS to allow SentinelOne to write the Cloud Funnel data to your S3 bucket and to allow Cribl Stream to pull this data. You will also need to allow the S3 bucket to send notifications to the SQS queue to let Cribl Stream know when new data is available.
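For context on what travels over that SQS queue, the sketch below parses the body of a standard S3 event notification and pulls out the bucket and object key for each newly created object. It only illustrates the message shape and is not Cribl Stream's internal implementation. The bucket and key values are hypothetical.

```python
import json
from urllib.parse import unquote_plus


def objects_from_sqs_body(body):
    """Return (bucket, key) tuples for ObjectCreated records in an
    S3 event notification delivered through SQS."""
    message = json.loads(body)
    objects = []
    # Messages without "Records" (such as the S3 test event) yield nothing.
    for record in message.get("Records", []):
        if not record.get("eventName", "").startswith("ObjectCreated"):
            continue
        s3 = record["s3"]
        # Object keys are URL-encoded in notifications ('+' means space).
        key = unquote_plus(s3["object"]["key"])
        objects.append((s3["bucket"]["name"], key))
    return objects


body = json.dumps({
    "Records": [{
        "eventName": "ObjectCreated:Put",
        "s3": {
            "bucket": {"name": "hypothetical-cloud-funnel-bucket"},
            "object": {"key": "cloud-funnel/part+0001.gz"},
        },
    }]
})
new_objects = objects_from_sqs_body(body)
```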

For those not yet familiar with configuring AWS S3 buckets, SQS Queues, and Identity and Access Management (IAM), we provide those details in a separate blog titled “AWS Configuration for Cribl Pack for SentinelOne Cloud Funnel”.

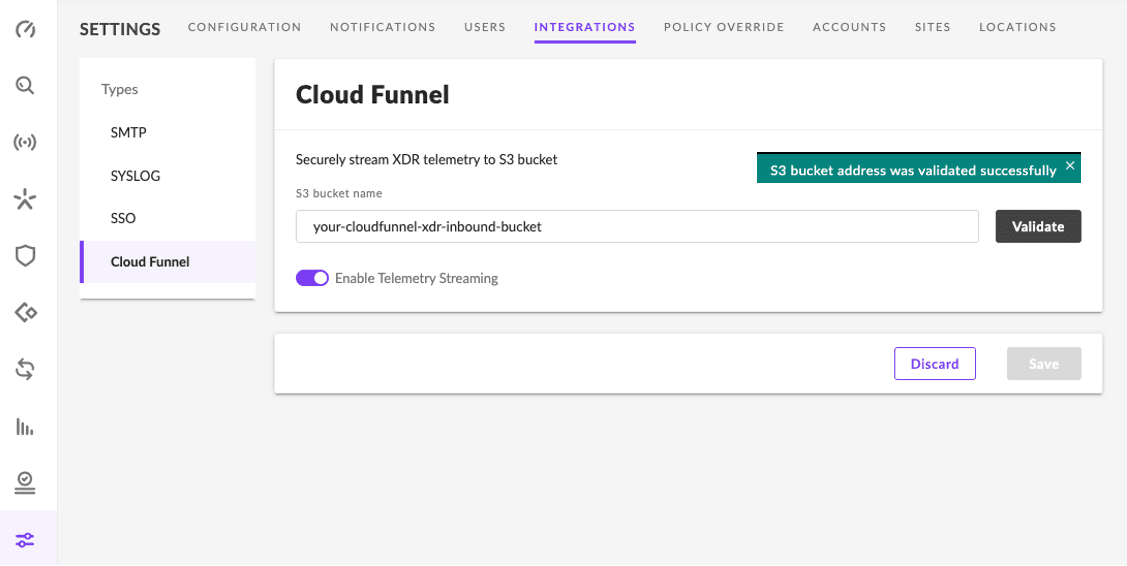

Configure the SentinelOne Singularity Console

Once logged into your Singularity console, select ‘Settings’ on the left, select ‘Integrations’ on the top, then select ‘Cloud Funnel’. After entering your S3 bucket name and clicking the ‘Validate’ button, you should see the success message below. Click the ‘Save’ button. If you have data flowing into SentinelOne Singularity, you should be able to check your S3 bucket and verify that objects are being written. Please refer to the blog referenced in the previous section, titled “AWS Configuration for Cribl Pack for SentinelOne Cloud Funnel”, for details on configuring the ACL that allows SentinelOne to write to your S3 bucket.

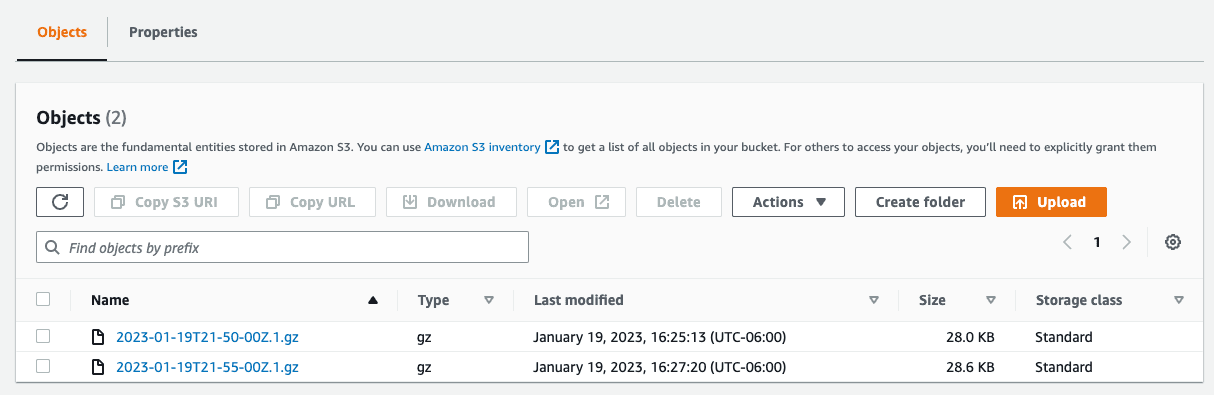

If you head over to your new AWS S3 bucket, you should now see objects being written. Let’s get that data over to Cribl Stream!

Configure a Cribl Stream Amazon S3 Source

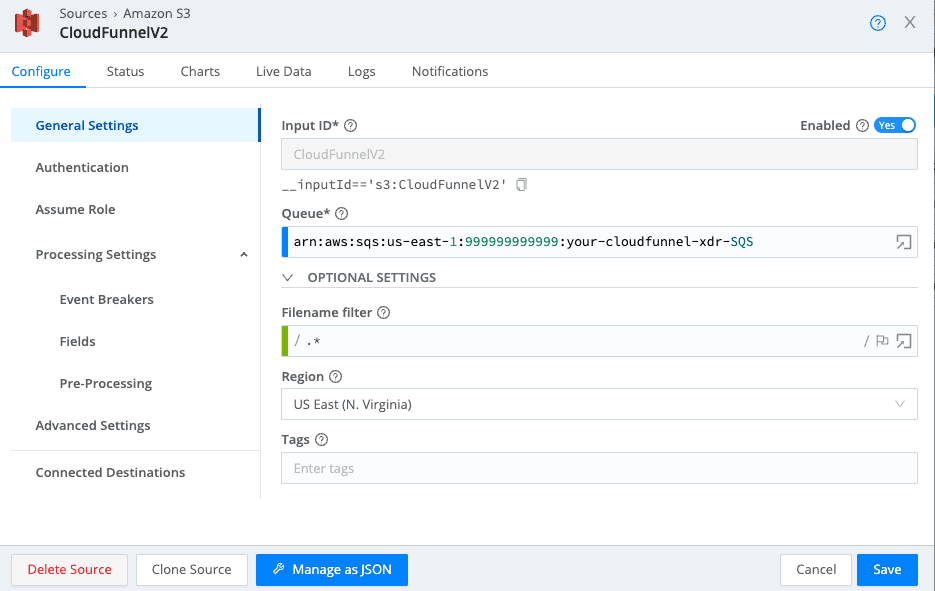

Select the ‘Amazon S3’ tile from the Data->Sources menu option within your Cribl Stream Worker Group, then click the ‘New Source’ button.

Provide an Input ID and populate the Queue text box with your AWS SQS ARN and Region as detailed below.

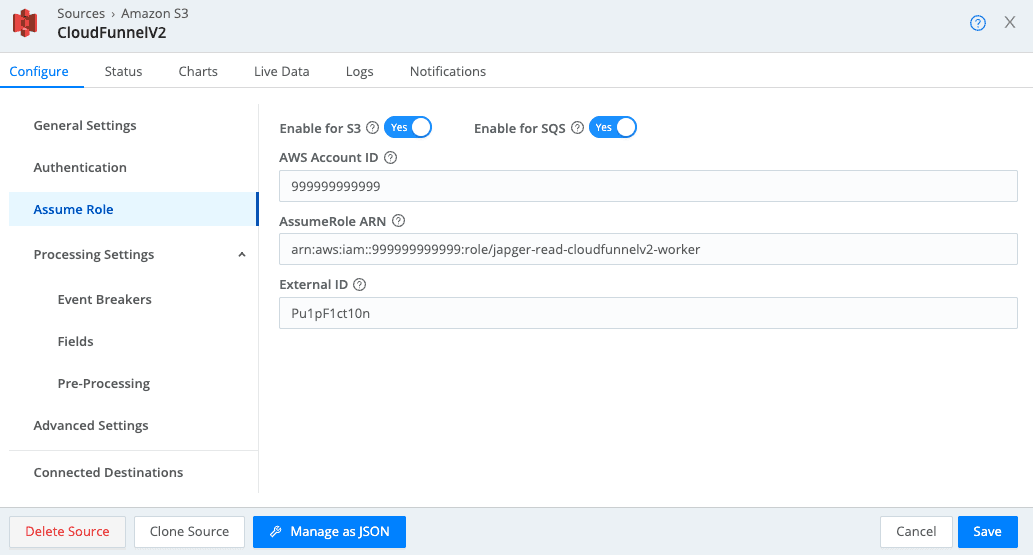

On the Authentication section, leave the Authentication Method set to the default ‘Auto’ method. Click on the Assume Role section and configure it as detailed below making sure to specify your AWS Account ID, AssumeRole ARN, and External ID. The External ID is optional but check this out for a bit of an explanation and a deeper dive into AWS Trusts and Cribl Stream.
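To show where the External ID fits on the AWS side, here is a small Python sketch that builds an IAM role trust policy document. The principal ARN and External ID values are placeholders that you would replace with your own, and your actual policy may need additional statements or conditions.

```python
import json


def build_trust_policy(trusted_principal_arn, external_id=None):
    """Build an IAM trust policy that lets `trusted_principal_arn` assume
    the role, optionally requiring a matching External ID."""
    statement = {
        "Effect": "Allow",
        "Principal": {"AWS": trusted_principal_arn},
        "Action": "sts:AssumeRole",
    }
    if external_id:
        # The caller must present this value when calling sts:AssumeRole.
        statement["Condition"] = {
            "StringEquals": {"sts:ExternalId": external_id}
        }
    return {"Version": "2012-10-17", "Statement": [statement]}


# Placeholder values for illustration only.
policy = build_trust_policy(
    "arn:aws:iam::111122223333:role/hypothetical-cribl-worker",
    external_id="hypothetical-external-id",
)
policy_json = json.dumps(policy, indent=2)
```

The External ID you enter in the Assume Role section must match the value in this condition, otherwise the AssumeRole call is denied.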

Click on Save to complete our S3 Source configuration. Before committing and deploying this config change, keep in mind that data will be ingested and likely sent to your default destination per the worker group routing. Now is the time to update your routing to send it where it needs to go or send it to devnull for testing. It’s a big, beautiful data stream but you will almost certainly need to send it through multiple pipelines or the below pack for optimization before sending it to one or more destinations.

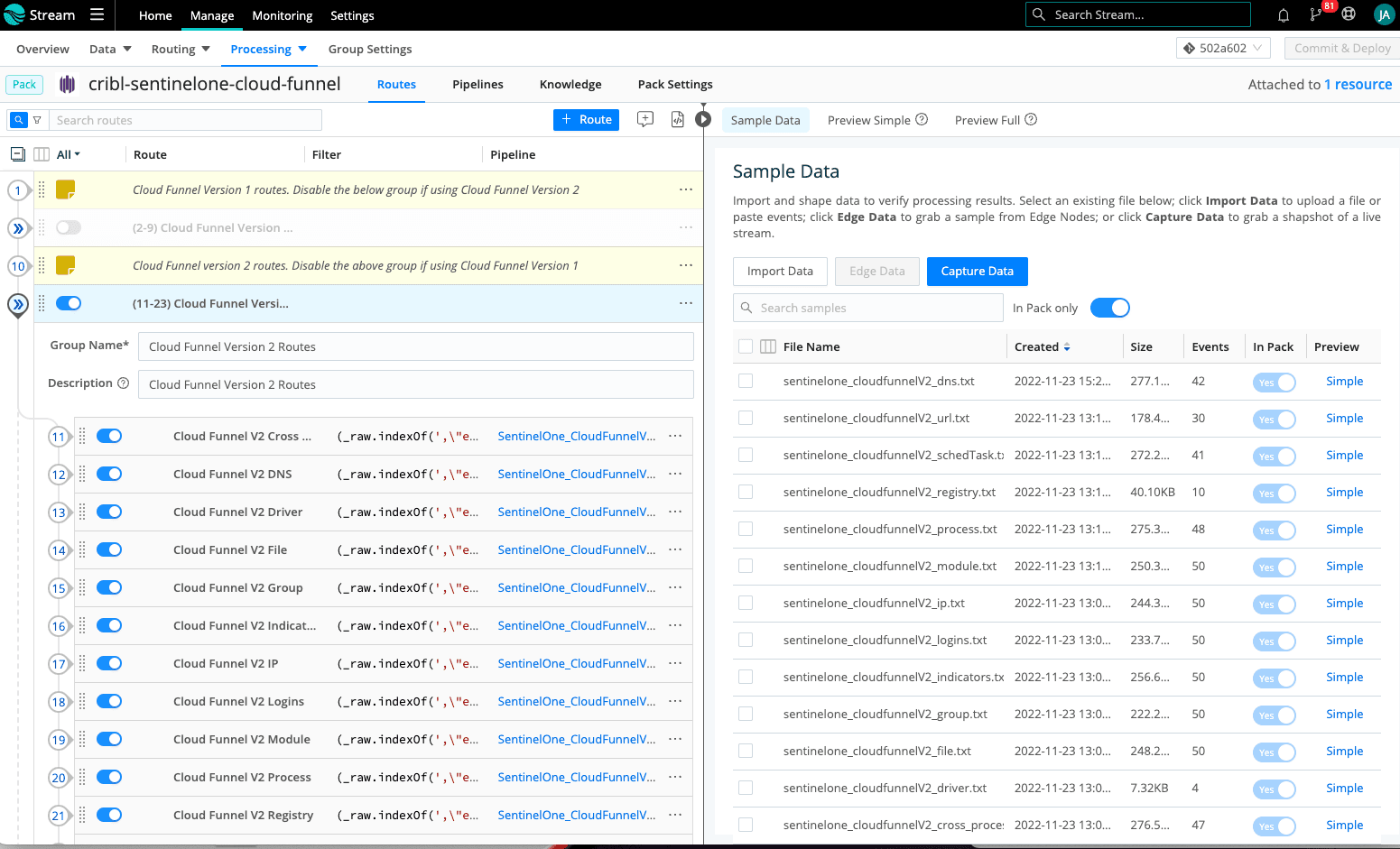

Install the Cribl Pack for SentinelOne Cloud Funnel

Download and install the Cribl Pack for SentinelOne Cloud Funnel from within your Cribl Stream Leader node. You will notice that the routing within the pack supports both versions 1 and 2 of the Cloud Funnel data. The pack includes sample data for every event type and category for both versions which will allow you to explore and prepare for your Cloud Funnel journey as you prepare to begin ingesting data. Each sample maps to a unique pipeline and the naming conventions make it easy to join them. As you examine the differences between the two versions, you will find that the version 1 structure employs a hierarchical JSON structure, version 2 is a flat JSON structure, and the field naming conventions are different between the two versions.
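To visualize the structural difference between a hierarchical and a flat JSON event, the sketch below flattens a nested dictionary into dotted keys. The sample event and its field names are hypothetical. Because the real version 1 and version 2 field names differ, this does not convert one version into the other.

```python
def flatten(obj, parent_key="", sep="."):
    """Flatten nested dictionaries into a single level with dotted keys."""
    flat = {}
    for key, value in obj.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key, sep))
        else:
            flat[full_key] = value
    return flat


# Hypothetical hierarchical event, not actual Cloud Funnel field names.
nested_event = {
    "agent": {"hostname": "ws-01", "os": {"name": "Windows"}},
    "event_type": "dns",
}
flat_event = flatten(nested_event)
```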

The below image details 2 groups in the left pane (one per Cloud Funnel version) containing the routing for all pipelines within each of the Cloud Funnel versions. Enable the group you are collecting and disable the other. You also see the sample data shipped within the pack on the right.

If you examine the lookups contained in the pack, you will see that we include a sample CMDB file that is referenced in each of the pipelines. I included it to provide you with a reference for how lookups function. Enrichments and normalizations, especially across the full spectrum of data types, are an important foundation component of your SIEM/SOAR. Give some thought as to how you will generate asset, identity, threat, or any other context and whether Cribl Stream uses a Redis approach or local lookup files. Cribl Stream provides a great deal of flexibility when it comes to enriching or normalizing data including the ability to use Collectors to build lookup files from the external systems on a recurring basis. There are numerous excellent Cribl blogs covering a variety of topics related to Cribl Stream that are worth the read.
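As a rough illustration of what a lookup-based enrichment does, the following Python sketch loads a small CSV keyed by hostname and copies the matching columns onto an event. The column names, the `cmdb_` prefix, and the event field name are hypothetical and do not mirror the sample CMDB file in the pack.

```python
import csv
import io


def load_lookup(csv_text, key_field):
    """Index CSV rows by the value in `key_field`."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[key_field]: row for row in reader}


def enrich(event, lookup, event_field, lookup_key, prefix="cmdb_"):
    """Return a copy of `event` with matching lookup columns added."""
    enriched = dict(event)
    match = lookup.get(event.get(event_field))
    if match:
        for column, value in match.items():
            if column != lookup_key:
                enriched[prefix + column] = value
    return enriched


cmdb_csv = "hostname,owner,criticality\nws-01,alice,high\n"
cmdb = load_lookup(cmdb_csv, "hostname")
enriched_event = enrich({"host": "ws-01"}, cmdb, "host", "hostname")
```

Events with no match pass through unchanged, which is usually the behavior you want when the CMDB is incomplete.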

The Majestic Million lookup and the other lookups in this pack that are prefixed with “well_known” are there for the purpose of either risk ranking or dropping events that deal with well-known or trusted behavior. You can think of this as the opposite of traditional threat intelligence, which focuses on possible high-risk or unknown behavior. The Majestic Million contains a list of the most popular DNS domains as a trusted indicator, and the other lookups are placeholders for various other trusted indicator types within the EDR data such as registry keypaths, file context, commands, process context, etc. Cribl Stream provides the option to significantly reduce ingest and performance overhead on your systems of analysis by dropping events containing indicators specific to trusted behavior while possibly sending the complete EDR dataset to lower-cost object storage.

Pipelines

This is where the work gets done. We have pipelines specific to each event type or category. SentinelOne did an awesome job with wrapping all events into a manageable number of categories and they include enough endpoint/user context in every event to simplify the process of building a “live CMDB” should you choose to do so. The concept of a Live CMDB has been around for quite some time and it involves the use of a pipeline or platform to extract asset and identity information from EDR data into a lookup file. This dynamic lookup can now be leveraged to enrich or normalize all other data sources that may contain something like an IP address but lack hostname information. Events containing this host-specific context are more easily visualized and correlated across multiple data sources within your system of analysis. If you would like to learn the foundation for building a Live CMDB with Cribl Stream via a Redis integration, check out Ahmed Kira’s blog titled “Empowering Security Engineers With the Cribl Pack from CrowdStrike” where you will see how to update and query Redis.
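The Live CMDB idea can be sketched in a few lines of Python. This version keeps the mapping in an in-memory dictionary purely for illustration, whereas the approach referenced above uses Redis. All field names (`ip`, `hostname`, `user`, `timestamp`, `src_ip`) are hypothetical.

```python
def update_live_cmdb(cmdb, edr_event):
    """Record the latest hostname/user seen for an IP address."""
    ip = edr_event.get("ip")
    hostname = edr_event.get("hostname")
    if ip and hostname:
        cmdb[ip] = {
            "hostname": hostname,
            "user": edr_event.get("user"),
            "last_seen": edr_event.get("timestamp"),
        }
    return cmdb


def add_host_context(event, cmdb, ip_field="src_ip"):
    """Return a copy of a non-EDR event with the hostname filled in
    when the live CMDB knows the IP address."""
    enriched = dict(event)
    entry = cmdb.get(event.get(ip_field))
    if entry:
        enriched["hostname"] = entry["hostname"]
    return enriched


live_cmdb = {}
update_live_cmdb(live_cmdb, {
    "ip": "192.0.2.10", "hostname": "ws-01",
    "user": "alice", "timestamp": 1700000000,
})
firewall_event = {"src_ip": "192.0.2.10", "action": "allow"}
with_host = add_host_context(firewall_event, live_cmdb)
```

Because the EDR feed keeps overwriting each IP entry with the most recent observation, the mapping stays current as addresses change hands.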

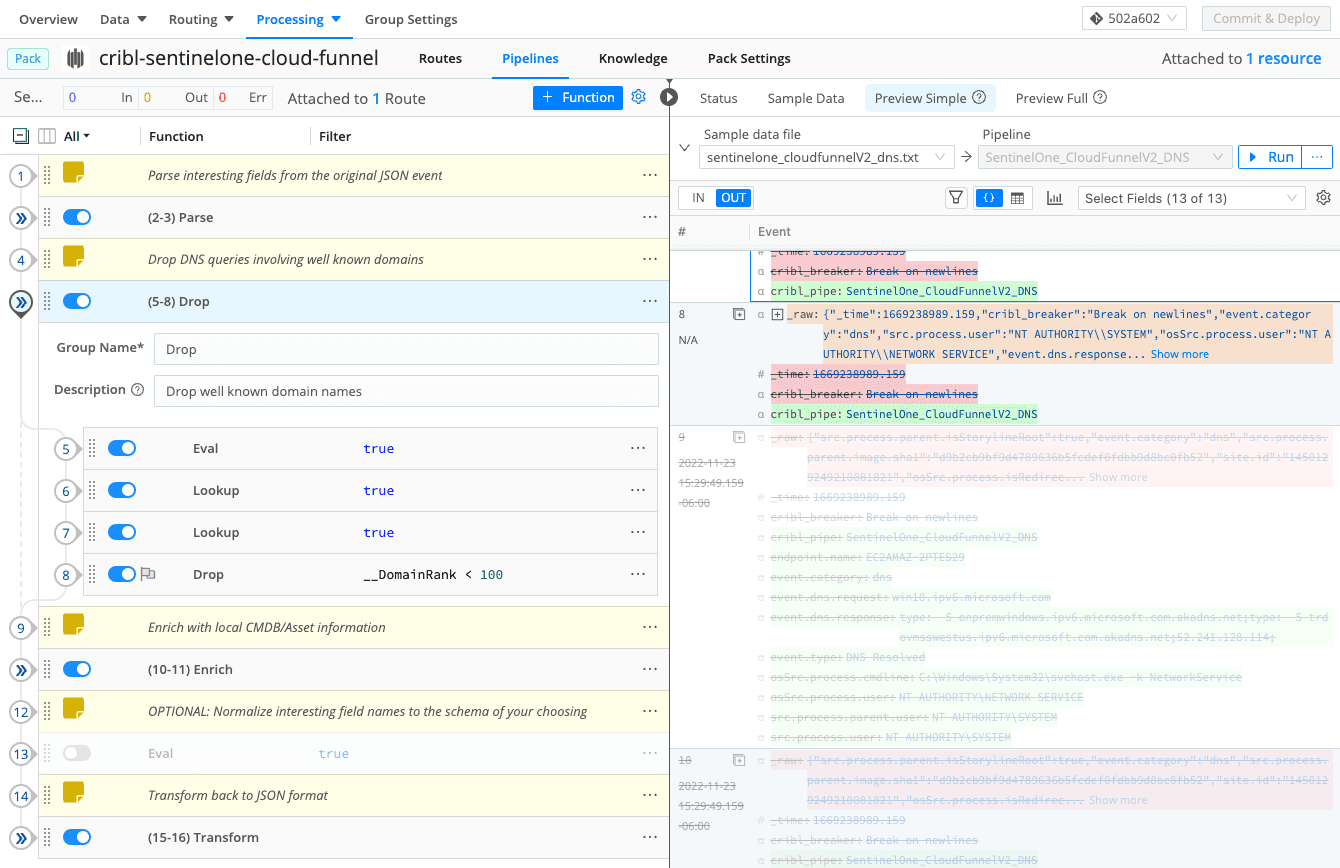

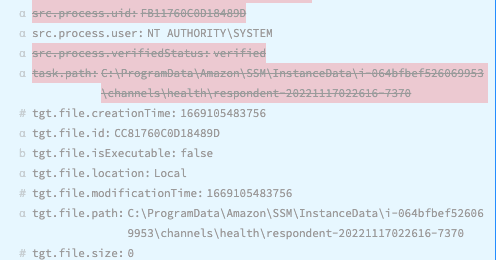

There is a lot going on in the image below, which highlights the functions in the pack specific to the version 2 DNS category of Cloud Funnel sample events. The first and last function groupings parse fields from the original JSON event and then transform them back to JSON after we are done working on them. This EDR data is extremely rich for all the right reasons, as the SentinelOne Singularity platform or MDR companies use it to correlate behavior using multiple and very complex mechanisms. Enterprise organizations can use this pack to determine exactly which fields should be preserved or dropped from the original event. If you use the Pipeline Diagnostics button to quantify what I am doing in the “Parse” function to specify which fields we keep, you will see that I am reducing the overall event length by over 95%.
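The parse, keep, and re-serialize flow can be approximated outside of Cribl Stream with the short Python sketch below, which also measures the size reduction. The field names and the padded sample event are hypothetical, and the reduction you see depends entirely on which fields you choose to keep.

```python
import json


def keep_fields(raw_event, fields_to_keep):
    """Parse a JSON event, keep only the listed fields, and serialize
    the result back to compact JSON."""
    parsed = json.loads(raw_event)
    slim = {name: parsed[name] for name in fields_to_keep if name in parsed}
    return json.dumps(slim, separators=(",", ":"))


def reduction_percent(before, after):
    """Percentage reduction in character length from `before` to `after`."""
    return round(100 * (1 - len(after) / len(before)), 1)


# Hypothetical event with one large field we do not need downstream.
raw = json.dumps({
    "dns_request": "example.com",
    "hostname": "ws-01",
    "padding": "x" * 200,
})
slim = keep_fields(raw, ["dns_request", "hostname"])
saved = reduction_percent(raw, slim)
```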

I’ve expanded the “Drop” group to highlight that we are performing lookups on both 2nd and 3rd-level domain names and dropping the event if it’s within the top 100 well-known domains on the internet. Choosing your cut-off for dropping events based on the domain ranking will require a bit of testing and coordination with multiple groups within your SOC but you can often expect to drop 40-60% of your DNS related events right out of the gate with this standard approach. Events 9 and 10 from the image that have been greyed out are examples of events that have been dropped because of queries involving well-known domains. As an alternative to dropping these events, you can leave the lookup-generated domain ranking and then route to multiple destinations later based on that ranking.
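The drop logic described above can be sketched as follows. This is an illustration rather than the pack's actual function configuration. It derives the 2nd and 3rd-level domain names by simple label counting, looks each one up in a rank table, and drops the event when the best rank falls within the cutoff. The rank table here uses reserved example domains with made-up ranks.

```python
def candidate_domains(query):
    """Return the 3rd-level and 2nd-level domain names for a DNS query,
    based on naive label counting."""
    labels = query.lower().rstrip(".").split(".")
    candidates = []
    if len(labels) >= 3:
        candidates.append(".".join(labels[-3:]))
    if len(labels) >= 2:
        candidates.append(".".join(labels[-2:]))
    return candidates


def best_rank(query, ranks):
    """Lowest (best) rank among the candidate domains, or None."""
    found = [ranks[d] for d in candidate_domains(query) if d in ranks]
    return min(found) if found else None


def should_drop(query, ranks, cutoff=100):
    """True when the query resolves to a domain ranked within `cutoff`."""
    rank = best_rank(query, ranks)
    return rank is not None and rank <= cutoff


# Made-up ranks for illustration.
ranks = {"example.com": 5, "example.org": 500}
drop_www = should_drop("www.example.com", ranks)
drop_org = should_drop("a.b.example.org", ranks)
```

Checking the 3rd-level name as well as the 2nd-level name is what lets a ranked entry such as a `co.uk` style domain match, since the 2nd-level name alone would be too generic. To keep the ranking for later routing instead of dropping, you could attach the result of `best_rank` to the event.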

The remaining functions perform simple asset lookups or act as a placeholder for functions that might perform an event transformation into the destination schema of your choosing.

Threat Indicators or Indicators of Compromise (IOC)

Your SOC likely consists of persons or groups performing several of the following activities: triage, investigations, detection engineering, architecture, threat intelligence, threat hunting, or adversary simulation. Cloud Funnel EDR data and Cribl Stream allow you to improve every one of these functions. It begins with Cribl providing you with the ability to define the fidelity of this data to best suit your security, compliance, performance, and cost needs. Detection Engineering quantitatively operationalizes your efforts to align to a cyber security framework such as MITRE ATT&CK. Create a CI/CD pipeline or use something like the MITRE ATT&CK Enterprise Matrix to track your progress by addressing visibility gaps and coverage of well-known tactics and techniques. These detections may need a layer of abstraction or risk weightings to turn them into actionable alerts but the insights they provide to an analyst are invaluable when it comes to triage and investigations.
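As one possible shape for the risk-weighting layer mentioned above, the sketch below sums per-technique weights for each entity and only surfaces entities whose total crosses a threshold. The weights, threshold, and field names are arbitrary illustrations that you would tune for your own environment.

```python
from collections import defaultdict


def risk_alerts(detections, weights, threshold=50, default_weight=10):
    """Return {entity: score} for entities whose summed detection
    weights meet or exceed `threshold`."""
    scores = defaultdict(int)
    for detection in detections:
        weight = weights.get(detection["technique_id"], default_weight)
        scores[detection["entity"]] += weight
    return {
        entity: score
        for entity, score in scores.items()
        if score >= threshold
    }


# Arbitrary example weights per ATT&CK technique ID.
weights = {"T1003": 40, "T1059": 15}
detections = [
    {"entity": "ws-01", "technique_id": "T1003"},
    {"entity": "ws-01", "technique_id": "T1059"},
    {"entity": "ws-02", "technique_id": "T1059"},
]
alerts = risk_alerts(detections, weights)
```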

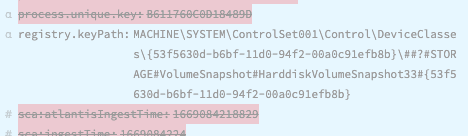

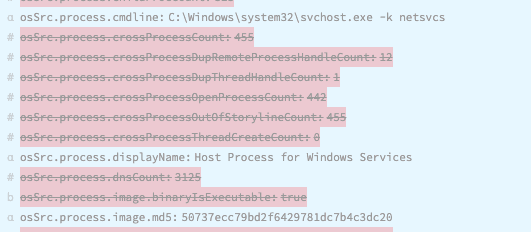

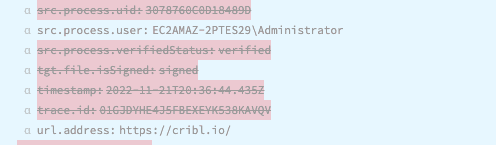

Many of your detections, threat intelligence, and threat hunting activities will utilize well-known IOCs from your EDR data as the trigger. See the below for a few examples of fields that might be examined as a source of IOCs:

Building an Adversary Simulation program within your SOC upon your complete collection of normalized data sources is a unifying effort across nearly all functions in the SOC. Attackers will constantly develop new techniques, evolve existing techniques, employ unknown techniques, or simply establish a foothold where a visibility gap exists. From a foundational perspective, organizations should continually and quantitatively strive to address visibility gaps in a meaningful manner. This is easier said than done, but leveraging Cribl Stream with Cloud Funnel data makes ingesting and operationalizing this critical data source within the SOC more realistic and affordable than it has ever been.
